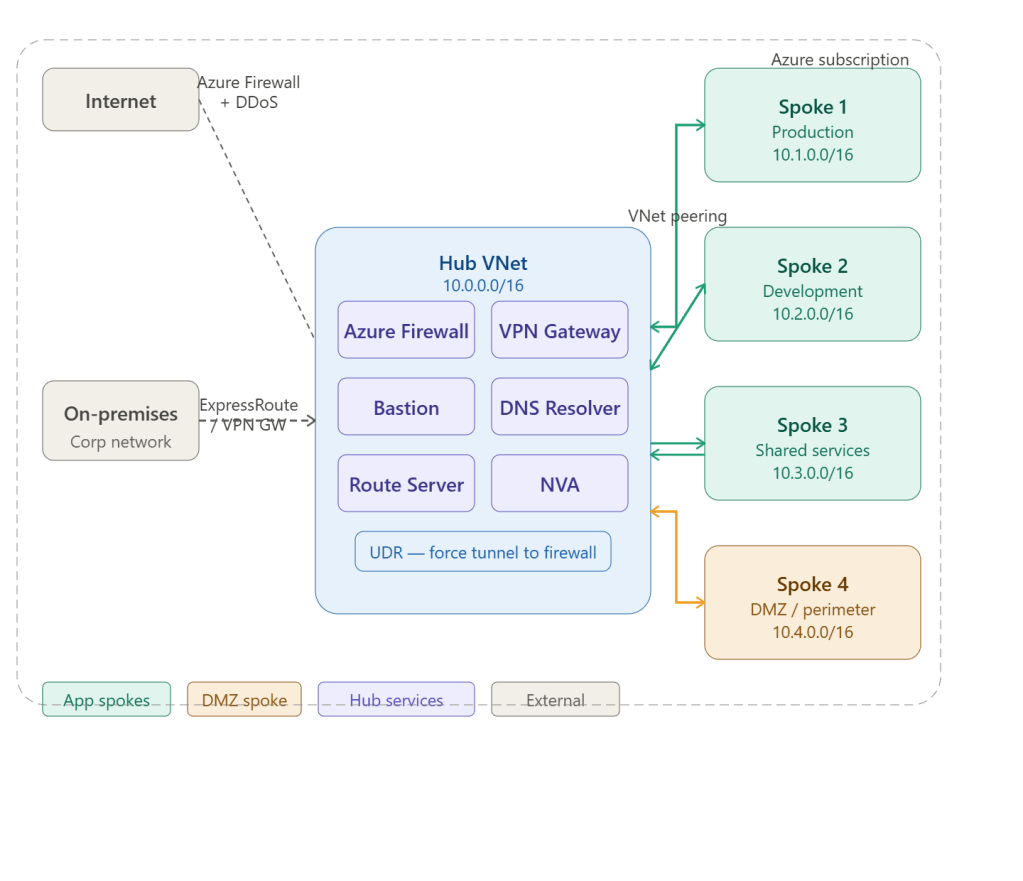

Here’s the Azure Hub and Spoke network architecture — the foundational enterprise network pattern on Azure. I’ll show it in two diagrams: the overall topology first, then the hub internals in detail.

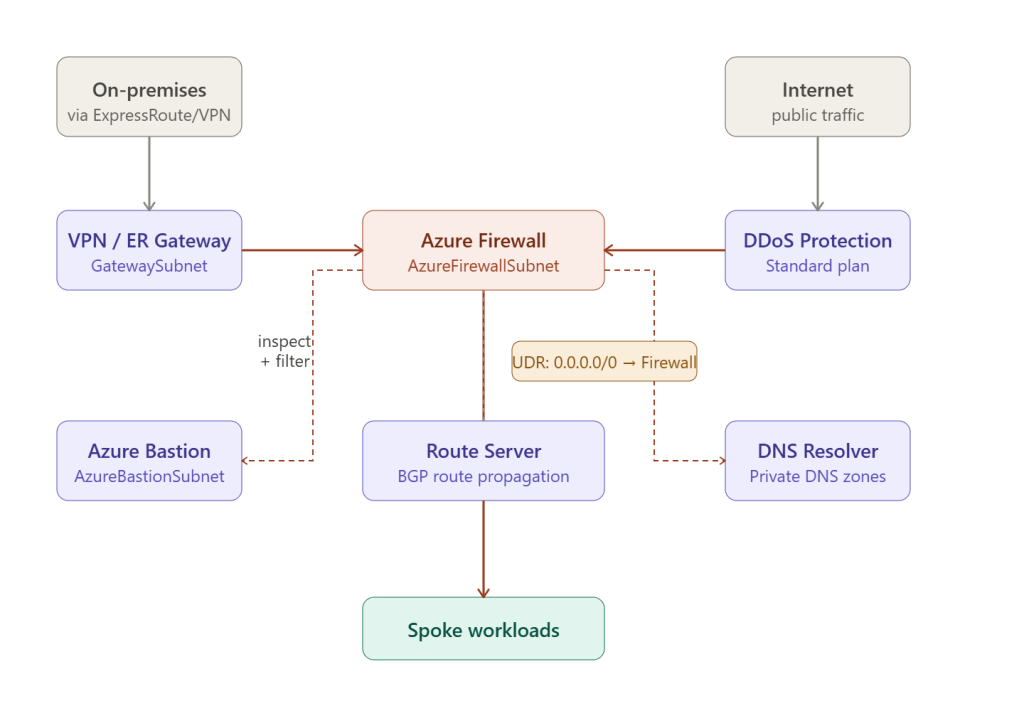

The topology shows the hub as the central control point, with all spoke VNets peered to it. Now here’s a closer look at what lives inside the hub and how traffic flows through it.

Azure Hub and Spoke — Key Design Principles

Why Hub and Spoke?

Hub and spoke is the recommended enterprise network topology for Azure. Instead of each team or workload managing its own connectivity and security, all shared services live in one central hub VNet, and workloads live in isolated spoke VNets peered to it.

Every spoke talks to the world THROUGH the hub — never directly.

The Hub VNet — what lives inside

The hub is the security and connectivity control plane. It contains no workloads — only shared infrastructure:

- VPN Gateway / ExpressRoute Gateway: the on-premises bridge, placed in a dedicated `GatewaySubnet`. All hybrid traffic enters and exits here.
- Azure Firewall: placed in `AzureFirewallSubnet`, it inspects all east-west (spoke-to-spoke) and north-south (internet/on-prem) traffic. Every spoke uses a User Defined Route (UDR) pointing `0.0.0.0/0` to the firewall’s private IP.
- Azure Bastion: placed in `AzureBastionSubnet`, it provides browser-based RDP/SSH to any VM in any peered spoke without requiring public IPs on the VMs.
- Route Server: exchanges BGP routes with Network Virtual Appliances (NVAs) so dynamic routing updates propagate automatically across all spokes.
- DNS Private Resolver: centralises DNS resolution for all private DNS zones, so every spoke resolves `privatelink.*` names (for example `privatelink.blob.core.windows.net`) correctly through the hub.
- DDoS Protection (formerly the Standard tier, now called DDoS Network Protection): a protection plan linked to the hub and spoke VNets, which protects the public IPs in those VNets from volumetric attacks. One plan can be shared by VNets across subscriptions.

The Spoke VNets — what lives inside

Each spoke is an isolated workload boundary:

| Spoke | Typical contents | CIDR |
|---|---|---|
| Production | App VMs, AKS, SQL MI, App Service | 10.1.0.0/16 |
| Development | Dev/test workloads, lower SKUs | 10.2.0.0/16 |
| Shared services | Active Directory DCs, monitoring agents | 10.3.0.0/16 |
| DMZ / perimeter | App Gateway, WAF, API Management | 10.4.0.0/16 |

Spokes cannot talk to each other directly: VNet peering is non-transitive, so traffic between spokes must traverse the hub firewall, giving you full inspection and control of lateral movement.

Traffic flow rules

All routing is forced through the hub firewall via UDRs applied to every spoke subnet:

    Spoke VM → UDR (0.0.0.0/0 → Firewall IP)
        ↓
    Azure Firewall (inspect, allow/deny)
        ↓
    Destination (internet / on-prem / other spoke)

This means even spoke-to-spoke traffic, for example a production VM calling a shared services VM, travels source spoke → hub firewall → destination spoke, so every flow is inspected and can be logged for a full audit trail.
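
To see why a single `0.0.0.0/0` UDR also captures spoke-to-spoke traffic, here is a minimal sketch of route selection for a VM in the production spoke. It models only two rules: the longest matching prefix wins, and a UDR beats a system route when prefixes tie. The `Route` class, the `select_route` helper, the next-hop labels, and the firewall IP `10.0.1.4` are illustrative assumptions, not Azure API objects.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prefix: ipaddress.IPv4Network
    next_hop: str          # simplified label, not an Azure next-hop type name
    is_udr: bool = False   # True for user-defined routes, False for system routes


def select_route(routes, destination):
    """Return the route used for destination, or None if nothing matches."""
    dest = ipaddress.ip_address(destination)
    matches = [r for r in routes if dest in r.prefix]
    if not matches:
        return None
    # Longest prefix wins; on equal prefix length a UDR beats a system route.
    return max(matches, key=lambda r: (r.prefix.prefixlen, r.is_udr))


def net(cidr):
    return ipaddress.ip_network(cidr)


FIREWALL_IP = "10.0.1.4"  # assumed private IP inside AzureFirewallSubnet

# Effective routes on a production spoke subnet (simplified).
PROD_SPOKE_ROUTES = [
    Route(net("10.1.0.0/16"), "own VNet"),              # system route
    Route(net("10.0.0.0/16"), "hub peering"),           # system route added by peering
    Route(net("0.0.0.0/0"), "internet"),                # default system route
    Route(net("0.0.0.0/0"), FIREWALL_IP, is_udr=True),  # UDR from the text
]

for dest in ("10.3.0.10", "8.8.8.8", "10.1.5.4"):
    print(dest, "->", select_route(PROD_SPOKE_ROUTES, dest).next_hop)
```

The production spoke has no route for `10.3.0.0/16` because it is not peered with the shared services spoke, so that destination falls through to the default route, where the UDR wins and sends it to the firewall. Traffic inside the spoke's own range still matches the more specific system route and stays local.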

Address space planning

Non-overlapping CIDRs are mandatory — VNet peering fails if address spaces overlap:

    Hub VNet:              10.0.0.0/16
      GatewaySubnet:       10.0.0.0/27  (/27 or larger recommended)
      AzureFirewallSubnet: 10.0.1.0/26  (min /26)
      AzureBastionSubnet:  10.0.2.0/26  (min /26)
      RouteServerSubnet:   10.0.3.0/27  (min /27)

    Spoke 1 (prod):        10.1.0.0/16
    Spoke 2 (dev):         10.2.0.0/16
    Spoke 3 (shared svc):  10.3.0.0/16
    Spoke 4 (DMZ):         10.4.0.0/16
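
A plan like this can be sanity-checked before anything is deployed. The sketch below uses Python's standard `ipaddress` module to confirm that no VNets overlap, that each hub subnet sits inside the hub range, and that each subnet meets the size listed in the plan. The `check_plan` function and the dictionary layout are hypothetical helpers for illustration, and the size thresholds are copied from the plan above rather than queried from Azure.

```python
import ipaddress
from itertools import combinations

HUB = "10.0.0.0/16"

# name -> (CIDR, longest prefix length the plan allows for that subnet)
HUB_SUBNETS = {
    "GatewaySubnet": ("10.0.0.0/27", 27),
    "AzureFirewallSubnet": ("10.0.1.0/26", 26),
    "AzureBastionSubnet": ("10.0.2.0/26", 26),
    "RouteServerSubnet": ("10.0.3.0/27", 27),
}

SPOKES = {
    "prod": "10.1.0.0/16",
    "dev": "10.2.0.0/16",
    "shared-svc": "10.3.0.0/16",
    "dmz": "10.4.0.0/16",
}


def check_plan(hub, hub_subnets, spokes):
    """Return a list of human-readable problems; an empty list means the plan passes."""
    problems = []
    hub_net = ipaddress.ip_network(hub)

    # 1. No two VNets (hub or spoke) may overlap, or peering will fail.
    vnets = {"hub": hub_net}
    vnets.update({name: ipaddress.ip_network(cidr) for name, cidr in spokes.items()})
    for (a, a_net), (b, b_net) in combinations(vnets.items(), 2):
        if a_net.overlaps(b_net):
            problems.append(f"VNets {a} and {b} overlap")

    # 2. Each hub subnet must sit inside the hub range and be large enough.
    subnets = {}
    for name, (cidr, longest_prefix) in hub_subnets.items():
        subnet = ipaddress.ip_network(cidr)
        subnets[name] = subnet
        if not subnet.subnet_of(hub_net):
            problems.append(f"{name} is outside the hub range")
        if subnet.prefixlen > longest_prefix:
            problems.append(f"{name} is smaller than /{longest_prefix}")

    # 3. Hub subnets must not overlap each other.
    for (a, a_net), (b, b_net) in combinations(subnets.items(), 2):
        if a_net.overlaps(b_net):
            problems.append(f"Subnets {a} and {b} overlap")

    return problems


print(check_plan(HUB, HUB_SUBNETS, SPOKES))
```

Adding a hypothetical fifth spoke such as `staging` at `10.2.128.0/17` would make the function report an overlap with `dev`, which is the mistake that would otherwise only surface when the peering is created.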

When to use Azure Virtual WAN instead

As a rule of thumb, hub and spoke with manual VNet peering stays manageable up to roughly 10 spokes. Beyond that, consider Azure Virtual WAN, a Microsoft-managed hub that handles routing, peering, and gateway scaling across dozens of spokes and multiple regions, at the cost of less customisation flexibility.
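
A rough way to see how the manual bookkeeping grows: each spoke needs a peering link on both the hub side and the spoke side, and each spoke subnet needs a route table association carrying the default route to the firewall. The sketch below simply counts those items. The `manual_items` function and the figure of three subnets per spoke are assumptions for illustration only.

```python
def manual_items(spokes: int, subnets_per_spoke: int) -> dict:
    """Count the items maintained by hand in a manually peered hub and spoke."""
    return {
        # One link on the hub side plus one on the spoke side, per spoke.
        "peering_links": 2 * spokes,
        # Every spoke subnet needs the route table with the firewall default route.
        "route_table_associations": spokes * subnets_per_spoke,
    }


for count in (4, 10, 30):
    print(count, "spokes:", manual_items(count, subnets_per_spoke=3))
```

The growth is linear, so the roughly-10-spoke threshold reflects operational effort rather than a hard platform limit.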