Here’s a practical high-level design (HLD) for a banking chatbot on Azure, covering the three things you asked for: components, data flow, and security controls.

1. Scope and goals

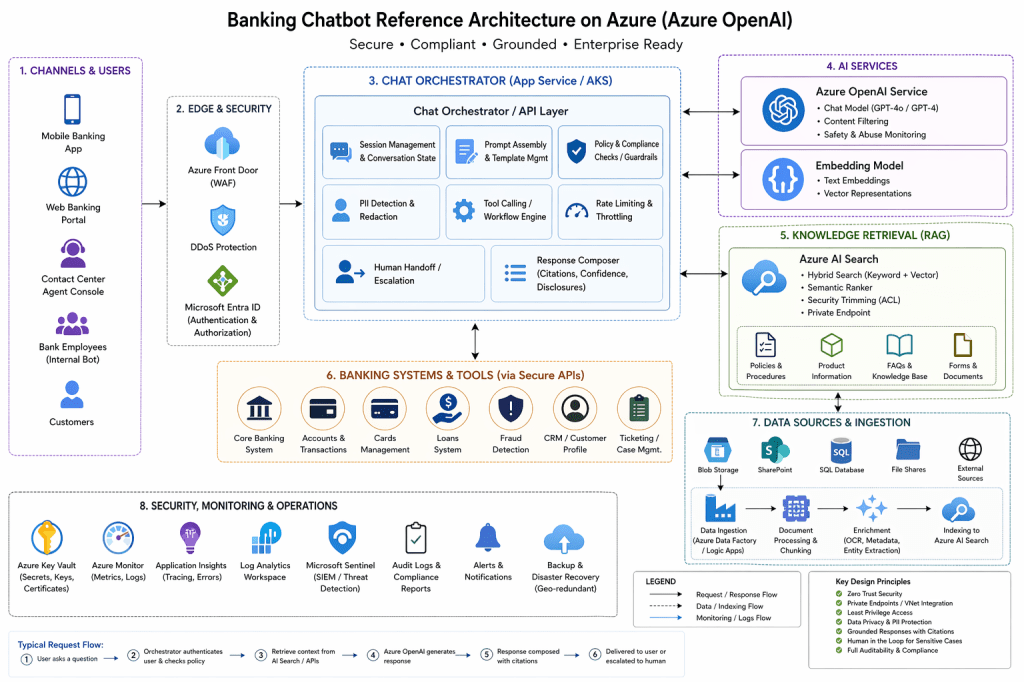

This design is for a banking chatbot that can answer grounded questions, retrieve approved internal knowledge, perform tightly controlled actions through backend APIs, and escalate sensitive cases to a human. On Azure, the common enterprise pattern is an application/orchestration layer in front of Azure OpenAI and Azure AI Search, with private networking and identity controls around the whole path. (Microsoft Learn)

2. High-level architecture

    Customers / Employees
              ↓
    Web / Mobile / Contact Center UI
              ↓
    API Gateway / WAF
              ↓
    Chat Orchestrator (App Service or AKS)
     ├─ Auth / session / rate limits
     ├─ Prompt assembly
     ├─ Policy & compliance checks
     ├─ PII redaction
     ├─ Tool calling / workflow engine
     ├─ Confidence scoring
     └─ Human handoff
              ↓
    +----------------------+----------------------+
    |                      |                      |
    v                      v                      v
    Azure OpenAI      Azure AI Search      Banking APIs / Systems
    (chat +           (hybrid RAG)         (accounts, cards, CRM,
     embeddings)                            ticketing, fraud, etc.)
         \                 |                 /
          \                |                /
           \               v               /
            \       Enterprise data       /
             \  (Blob, SharePoint, SQL)  /
              +-------------------------+

    Supporting:
    - Microsoft Entra ID
    - Azure Key Vault
    - Azure Monitor / App Insights / Log Analytics
    - Microsoft Sentinel
    - Private Endpoints / VNet Integration

Microsoft’s current baseline enterprise chat architecture uses a secured app layer in front of model and retrieval services, and Azure AI Search is the recommended grounding layer for RAG with hybrid retrieval options. (Microsoft Learn)

3. Core components

A. Channels

The chatbot can be exposed through mobile banking, web banking, employee portal, or contact-center console. The UI should not call the model directly; it should go through a backend orchestrator so the bank can enforce policy, logging, and authorization centrally. That backend-first pattern is part of Microsoft’s baseline architecture. (Microsoft Learn)

B. API gateway / edge

Put an API gateway and WAF in front of the chatbot for TLS termination, request filtering, and traffic governance, and pair them with network-level DDoS protection, which a WAF alone does not provide. This is consistent with Microsoft’s baseline Azure web/chat reference designs, which assume a secured edge in front of the application layer. (Microsoft Learn)

C. Chat orchestrator

This is the main control layer. It manages:

- authentication and session state

- prompt templates

- retrieval requests

- business-rule checks

- tool calling to banking APIs

- confidence scoring

- citations/disclosures

- escalation to humans

Microsoft’s enterprise chat reference architecture explicitly separates this orchestration layer from the model and data stores. (Microsoft Learn)

D. Azure OpenAI

Use Azure OpenAI for:

- chat generation

- embeddings for retrieval

Microsoft documents built-in content filtering and abuse monitoring for Azure OpenAI (part of Azure Direct Models). These are useful baseline guardrails for an enterprise deployment, but they do not replace your own banking-specific controls. (Microsoft Learn)

E. Azure AI Search

Use Azure AI Search as the RAG layer for policies, product docs, SOPs, FAQs, forms, and knowledge articles. Azure AI Search supports hybrid retrieval with keyword, vector, and semantic ranking, plus chunking/enrichment patterns for PDFs and images. It also supports document-level security trimming patterns. (Microsoft Learn)
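As a rough sketch of how hybrid retrieval and security trimming can combine in one query, the helper below builds a request body with a keyword query, a vector query, and a group-based filter. The field names (`group_ids`, `content_vector`, `title`, `content`, `source_url`) are hypothetical index fields, and the body shape follows the Search Documents REST API as I understand it, so check it against the API version you target.

```python
import re
from typing import Any

# Only allow simple identifiers (e.g. GUID-like group IDs) inside the filter string.
_SAFE_ID = re.compile(r"^[A-Za-z0-9_-]+$")


def build_security_filter(group_ids: list[str], field: str = "group_ids") -> str:
    """Build an OData filter that keeps documents tagged with any of the caller's groups."""
    if not group_ids:
        # Refuse to search unfiltered when the caller has no group memberships.
        raise ValueError("caller has no groups")
    for gid in group_ids:
        if not _SAFE_ID.match(gid):
            raise ValueError(f"unexpected characters in group id: {gid!r}")
    joined = ",".join(group_ids)
    return f"{field}/any(g: search.in(g, '{joined}'))"


def build_hybrid_query(
    text: str, embedding: list[float], group_ids: list[str], top: int = 5
) -> dict[str, Any]:
    """Combine keyword search, a vector query, and the security filter in one body."""
    return {
        "search": text,  # keyword part of the hybrid query
        "vectorQueries": [
            {
                "kind": "vector",
                "vector": embedding,
                "fields": "content_vector",  # hypothetical vector field name
                "k": top,
            }
        ],
        "filter": build_security_filter(group_ids),
        "select": "id,title,content,source_url",  # hypothetical field names
        "top": top,
    }
```

The orchestrator, not the client, should resolve the caller's group IDs from the identity token, so a user cannot widen their own filter.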

F. Enterprise data sources

Typical sources are:

- Blob Storage

- SharePoint

- SQL or NoSQL operational data stores (for example, Azure SQL or Azure Cosmos DB)

- document repositories

- internal policy systems

These sources should feed an ingestion pipeline that extracts, chunks, enriches, and indexes content into Azure AI Search. Microsoft’s RAG guidance calls out chunking, OCR, document extraction, and enrichment as core parts of the pattern. (Microsoft Learn)
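To make the chunking step concrete, here is a minimal sketch of fixed-size chunking with overlap. It splits on characters for simplicity; the sizes are illustrative assumptions, and a real pipeline would more likely split on tokens, sentences, or document structure.

```python
def chunk_text(text: str, max_chars: int = 1000, overlap: int = 200) -> list[str]:
    """Split text into chunks of at most max_chars, each sharing `overlap` chars with the previous one."""
    if max_chars <= 0:
        raise ValueError("max_chars must be positive")
    if not 0 <= overlap < max_chars:
        raise ValueError("overlap must be in [0, max_chars)")

    chunks: list[str] = []
    step = max_chars - overlap  # how far the window advances each time
    start = 0
    while start < len(text):
        chunks.append(text[start:start + max_chars])
        if start + max_chars >= len(text):
            break  # this chunk already reached the end of the text
        start += step
    return chunks
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk, at the cost of indexing some text twice.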

G. Banking systems / tools

The orchestrator should call approved backend APIs, not let the model talk directly to core banking systems. Examples:

- account summary API

- card freeze/unfreeze API

- loan status API

- CRM/ticketing API

- fraud escalation workflow

This is an architectural recommendation rather than a Microsoft product rule, but it follows the same control-layer pattern in Microsoft’s baseline chat architecture. (Microsoft Learn)
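One way to enforce this is an allowlist: the model may propose a tool call, but the orchestrator only dispatches names and arguments it recognizes. The sketch below is illustrative; the tool names and the in-process handlers are hypothetical stand-ins for calls to the bank's real APIs.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Tool:
    name: str
    required_args: frozenset[str]
    handler: Callable[..., dict[str, Any]]


def freeze_card(card_id: str) -> dict[str, Any]:
    # Hypothetical stand-in for the card-management API call.
    return {"card_id": card_id, "status": "frozen"}


def loan_status(loan_id: str) -> dict[str, Any]:
    # Hypothetical stand-in for the loan status API call.
    return {"loan_id": loan_id, "status": "in_review"}


ALLOWED_TOOLS: dict[str, Tool] = {
    tool.name: tool
    for tool in (
        Tool("freeze_card", frozenset({"card_id"}), freeze_card),
        Tool("loan_status", frozenset({"loan_id"}), loan_status),
    )
}


def dispatch(tool_name: str, args: dict[str, Any]) -> dict[str, Any]:
    """Run a model-proposed tool call only if the tool and its arguments are approved."""
    tool = ALLOWED_TOOLS.get(tool_name)
    if tool is None:
        raise PermissionError(f"tool not allowlisted: {tool_name}")
    if set(args) != tool.required_args:
        raise ValueError(f"unexpected arguments for {tool_name}: {sorted(args)}")
    return tool.handler(**args)
```

Anything the model asks for outside the registry fails closed, which keeps the set of reachable banking operations reviewable in one place.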

4. End-to-end data flow

Flow 1: Knowledge question

Example: “What is the fee for an international wire?”

- User sends a message from mobile/web.

- Gateway forwards it to the orchestrator.

- Orchestrator authenticates the user and applies policy checks.

- Orchestrator sends a retrieval query to Azure AI Search.

- Azure AI Search returns grounded chunks and metadata.

- Orchestrator builds the prompt with citations and instructions.

- Azure OpenAI generates the answer.

- Orchestrator adds disclosure text and returns the response.

This is a standard RAG pattern: retrieve first, then generate with grounded context. Azure AI Search documentation explicitly describes this model. (Microsoft Learn)
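The prompt-assembly and disclosure steps above can be sketched as follows. The chunk keys (`title`, `content`), the instruction wording, and the disclosure text are all assumptions for illustration; the messages use the familiar role/content chat format.

```python
DISCLOSURE = "This answer was generated by an AI assistant from approved bank documents."


def build_grounded_messages(
    question: str, chunks: list[dict[str, str]]
) -> list[dict[str, str]]:
    """Build chat messages that restrict the model to numbered retrieved sources."""
    sources = "\n\n".join(
        f"[{i}] {chunk['title']}\n{chunk['content']}"
        for i, chunk in enumerate(chunks, start=1)
    )
    system = (
        "You are a banking assistant. Answer only from the numbered sources below "
        "and cite them as [n]. If the sources do not contain the answer, say so "
        "and offer to connect the user to an agent.\n\nSources:\n" + sources
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


def finalize(answer: str) -> str:
    """Append the disclosure text before the response leaves the orchestrator."""
    return f"{answer}\n\n{DISCLOSURE}"
```

Numbering the sources in the prompt lets the orchestrator map each `[n]` in the answer back to a document URL when it renders citations.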

Flow 2: Action request

Example: “Freeze my debit card.”

- User sends the request.

- Orchestrator authenticates and checks entitlements.

- Orchestrator classifies this as an action, not just Q&A.

- Orchestrator optionally uses the model to interpret intent.

- Orchestrator calls the bank’s card-management API.

- Backend system performs the action.

- Orchestrator returns a confirmed result or escalates if needed.

The key design principle is that the model can help interpret intent, but the backend system remains the source of truth and enforcement. This is an architectural best practice built on the app-layer separation Microsoft recommends. (Microsoft Learn)
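A minimal sketch of that principle for the card-freeze example: the orchestrator checks entitlement and ownership itself, and the text returned to the user is derived from the backend's response, not from model output. `User`, `CardApi`, and the `card:freeze` entitlement string are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class User:
    user_id: str
    entitlements: set[str]
    card_ids: set[str]


class CardApi:
    """In-memory stand-in for the bank's card-management API."""

    def __init__(self) -> None:
        self.status: dict[str, str] = {}

    def freeze(self, card_id: str) -> dict[str, Any]:
        self.status[card_id] = "frozen"
        return {"card_id": card_id, "status": "frozen"}


def handle_freeze_request(user: User, card_id: str, api: CardApi) -> dict[str, str]:
    """Check entitlements, call the backend, and report only what the backend confirmed."""
    if "card:freeze" not in user.entitlements:
        return {"outcome": "escalate", "reason": "not entitled"}
    if card_id not in user.card_ids:
        return {"outcome": "escalate", "reason": "card not owned by user"}

    result = api.freeze(card_id)
    if result.get("status") != "frozen":
        return {"outcome": "escalate", "reason": "backend did not confirm"}
    return {"outcome": "done", "message": f"Card ending {card_id[-4:]} is now frozen."}
```

In production the backend API should repeat these checks; the orchestrator-side check is a second layer, not a replacement.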

Flow 3: Sensitive or low-confidence case

Example: fraud complaint, legal complaint, hardship, uncertain answer.

- Orchestrator detects a sensitive topic or low confidence.

- It blocks automated completion or limits the response.

- It routes the case to a human banker/contact-center agent.

- Logs and case metadata are stored for audit.

Human handoff is not a single Azure feature, but it is a recommended enterprise control pattern for regulated use cases where accuracy and accountability matter. Azure’s baseline architecture supports orchestrator-driven workflow and escalation patterns. (Microsoft Learn)
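The routing decision in this flow can be as simple as the sketch below. The topic labels and the 0.7 threshold are assumptions; how the topic and confidence score are produced (classifier, retrieval scores, model self-assessment) is left open.

```python
from typing import Any

SENSITIVE_TOPICS = {"fraud", "legal_complaint", "hardship"}  # illustrative labels
CONFIDENCE_THRESHOLD = 0.7  # assumed cut-off; tune against real traffic


def route(topic: str, confidence: float) -> str:
    """Return 'handoff' for sensitive topics or low confidence, else 'auto'."""
    if topic in SENSITIVE_TOPICS:
        return "handoff"
    if confidence < CONFIDENCE_THRESHOLD:
        return "handoff"
    return "auto"


def handle_case(
    case_id: str, topic: str, confidence: float, audit_log: list[dict[str, Any]]
) -> str:
    """Route the case and record the decision for audit."""
    decision = route(topic, confidence)
    audit_log.append(
        {"case_id": case_id, "topic": topic, "confidence": confidence, "decision": decision}
    )
    return decision
```

Sensitive topics hand off regardless of confidence, so a confident but wrong answer on a fraud complaint never reaches the customer unreviewed.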

5. Security controls

Identity and access

Use Microsoft Entra ID for workforce identities and your customer identity layer for retail users. For retrieval, use identity-aware filtering and document-level access trimming so users only retrieve content they are allowed to see. Microsoft documents Entra-based auth and Azure AI Search security trimming for this purpose. (Microsoft Learn)

Private networking

Use private endpoints and VNet integration for Azure OpenAI, Azure AI Search, Key Vault, and the application tier where possible. Microsoft’s baseline chat architecture emphasizes private connectivity, and Key Vault supports Private Link integration. (Microsoft Learn)

Secrets and keys

Store secrets, certificates, and encryption keys in Azure Key Vault. Key Vault is designed for secure storage of secrets, keys, and certificates and supports logging and integration with Azure Monitor. (Microsoft Learn)

Managed identities

Prefer managed identities between Azure services instead of hard-coded secrets. Microsoft documents managed-identity-based authentication for Key Vault and uses passwordless patterns in Azure application architectures. (Microsoft Learn)
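For example, an orchestrator running on App Service or AKS could read a secret from Key Vault without any stored credential. This sketch assumes the `azure-identity` and `azure-keyvault-secrets` packages are installed and that the app's managed identity has been granted read access to secrets; the vault and secret names are placeholders.

```python
def vault_url(vault_name: str) -> str:
    """Key Vault URL for the Azure public cloud (sovereign clouds use other DNS suffixes)."""
    return f"https://{vault_name}.vault.azure.net"


def read_secret(vault_name: str, secret_name: str) -> str:
    """Fetch a secret using whatever identity the environment provides."""
    # Imported lazily so this module still loads where the Azure SDK is absent.
    from azure.identity import DefaultAzureCredential
    from azure.keyvault.secrets import SecretClient

    # DefaultAzureCredential tries managed identity when running in Azure
    # and falls back to developer credentials on a workstation.
    credential = DefaultAzureCredential()
    client = SecretClient(vault_url=vault_url(vault_name), credential=credential)
    return client.get_secret(secret_name).value
```

No connection string or API key appears in code or configuration; access is governed by the role assignment on the vault.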

Content safety

Use Azure OpenAI’s built-in content filtering and abuse monitoring, but treat those as baseline controls rather than your only compliance layer. Banking-specific policies still belong in the orchestrator. Azure documents both abuse monitoring and configurable harm categories/severity concepts. (Microsoft Learn)

Data protection

Minimize prompt data, redact unnecessary PII before model calls, and keep regulated records in approved systems of record. Azure publishes data privacy/security information for Azure Direct Models, including Azure OpenAI. (Microsoft Learn)
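As a rough illustration of redacting before the model call, the sketch below masks email addresses and card-like digit runs with regular expressions. The patterns are deliberately simple assumptions and will miss cases; a production deployment would more likely rely on a dedicated PII detection service plus bank-specific rules.

```python
import re

# Order matters: patterns are applied in sequence to the same text.
_PATTERNS: list[tuple[str, re.Pattern[str]]] = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    # 13 to 19 digits, optionally separated by single spaces or hyphens.
    ("CARD", re.compile(r"\b(?:\d[ -]?){12,18}\d\b")),
]


def redact(text: str) -> str:
    """Replace matched PII with a bracketed label such as [EMAIL] or [CARD]."""
    for label, pattern in _PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

Keeping a label in place of the value preserves enough context for the model to respond sensibly while the raw data stays out of the prompt.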

Monitoring and audit

Send logs, traces, and security events to Azure Monitor / Application Insights / Log Analytics, and use Microsoft Sentinel for SIEM/SOC workflows. Key Vault also supports exporting logs to Azure Monitor. (Microsoft Learn)

6. Non-functional requirements

Availability

Deploy the app tier with redundancy and design for zone/region resilience where needed. Microsoft’s baseline chat architecture is explicitly aimed at secure, highly available, zone-redundant enterprise chat applications. (Microsoft Learn)

Scalability

Scale the stateless app/orchestrator tier horizontally, keep chat history in a dedicated store, and scale search/model capacity independently. This separation follows the Azure reference pattern where the app, model, and retrieval tiers are distinct. (Microsoft Learn)

Auditability

Every model call, retrieval event, tool call, and escalation path should be logged with correlation IDs. This is a design recommendation built on Azure’s monitoring stack and the needs of regulated environments. (Microsoft Learn)
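A minimal sketch of correlated audit records: one ID is generated per user turn and attached to every event in that turn, so the retrieval, model call, and tool call can be joined later in Log Analytics. The event names and fields are illustrative assumptions.

```python
import json
import time
import uuid
from typing import Any


def new_correlation_id() -> str:
    """Generate one ID per user turn; pass it to every downstream call."""
    return str(uuid.uuid4())


def audit_event(correlation_id: str, event_type: str, **fields: Any) -> str:
    """Serialize one audit record as a JSON line ready for a log sink."""
    record = {
        "ts": time.time(),
        "correlation_id": correlation_id,
        "event": event_type,
        **fields,
    }
    return json.dumps(record, sort_keys=True)


# Example turn: three events that share one correlation ID.
cid = new_correlation_id()
lines = [
    audit_event(cid, "retrieval", index="policies", hits=4),
    audit_event(cid, "model_call", deployment="chat", prompt_chars=1830),
    audit_event(cid, "tool_call", tool="freeze_card", outcome="done"),
]
```

Log sizes, IDs, and outcomes rather than raw prompts, so the audit trail itself does not become a store of customer PII.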

7. Recommended deployment split

For a bank, split the deployment into two bots:

Customer bot

- narrow scope

- strict action permissions

- early human escalation

- only approved public/customer-facing knowledge

Employee copilot

- broader internal knowledge

- document-level access trimming

- workflow tools for CRM and case systems

- stronger audit controls

This split is an architectural recommendation because customer-facing and employee-facing risk profiles are usually different, while Azure’s identity and retrieval controls support both models. (Microsoft Learn)

8. HLD summary table

| Layer | Main components | Purpose | Key controls |
|---|---|---|---|
| Channels | Mobile, web, contact center, employee portal | User interaction | Auth, session controls |
| Edge | API gateway, WAF | Secure entry point | TLS, DDoS, request filtering |
| App tier | Orchestrator on App Service/AKS | Prompting, policy, tool calling, handoff | Rate limits, PII redaction, audit |
| AI tier | Azure OpenAI | Response generation, embeddings | Content filtering, abuse monitoring |
| Retrieval tier | Azure AI Search | Grounding and citations | Hybrid search, ACL/security trimming |
| Data tier | Blob, SharePoint, SQL, docs | Knowledge sources | Access control, ingestion governance |
| Systems tier | Core banking APIs, CRM, fraud, cards | Trusted actions and transactions | API auth, least privilege |
| Security/ops | Entra ID, Key Vault, Monitor, Sentinel | Identity, secrets, monitoring | Private endpoints, logging, SIEM |

The Azure-specific parts of this table are grounded in Microsoft’s official architecture, retrieval, identity, and Key Vault guidance. (Microsoft Learn)

9. Best one-line design

Use a secure orchestrator in front of Azure OpenAI and Azure AI Search, ground policy answers with RAG, route actions through approved banking APIs, and enforce identity, private networking, secrets management, logging, and human escalation throughout. (Microsoft Learn)