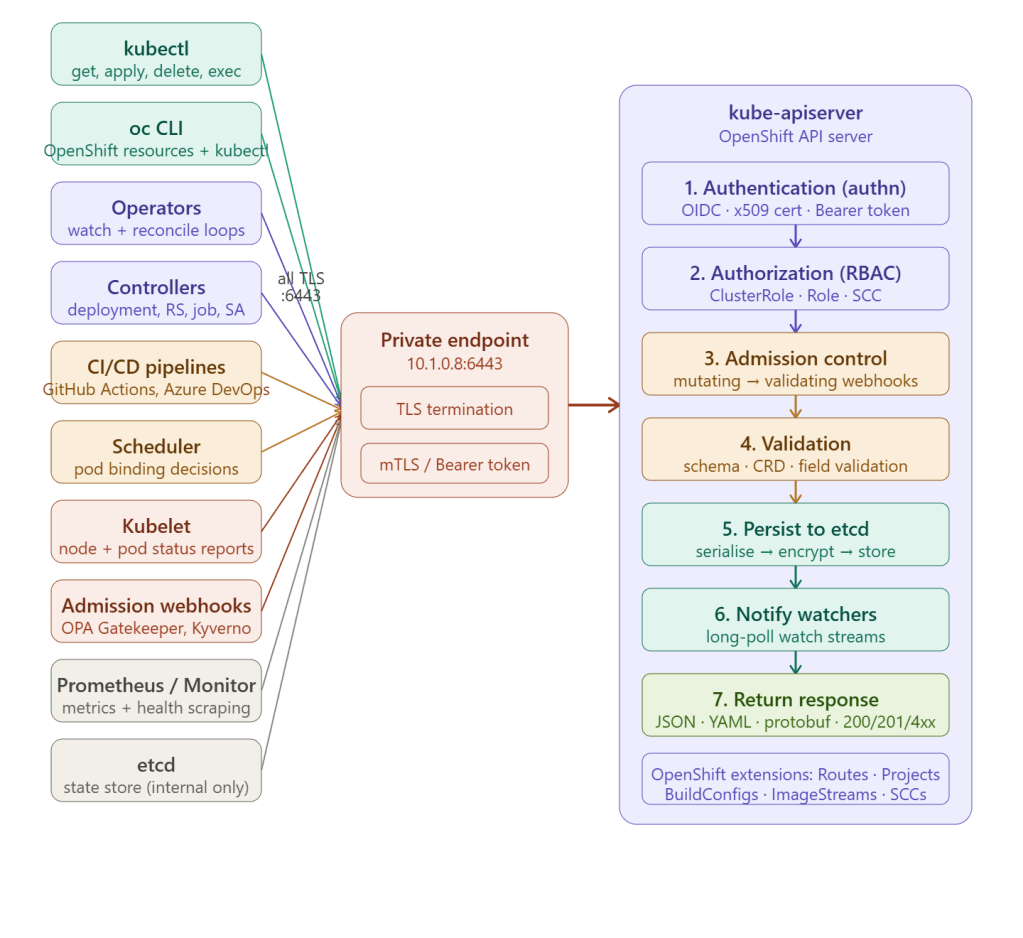

Kubernetes API Operations Through the ARO Private Endpoint

Every interaction with an ARO cluster, whether from a human, a tool, or an automated controller, arrives as a TLS connection to port 6443 on the API server private endpoint. Each client opens its own connections, but they all terminate at the same place: the API server is the centre of gravity for all cluster operations.

Every Operation Is a REST Call

The Kubernetes API server exposes a RESTful HTTP/2 API over TLS. Every tool — kubectl, oc, operators, kubelet — translates its work into one of five HTTP verbs against a resource path:

    GET    /api/v1/namespaces/payments/pods            list pods
    GET    /api/v1/namespaces/payments/pods/web-1      get single pod
    POST   /api/v1/namespaces/payments/pods            create pod
    PUT    /api/v1/namespaces/payments/pods/web-1      replace pod
    PATCH  /api/v1/namespaces/payments/pods/web-1      partial update
    DELETE /api/v1/namespaces/payments/pods/web-1      delete pod
    GET    /api/v1/namespaces/payments/pods?watch=1    watch stream

Every one of these travels as TLS-encrypted HTTP/2 to 10.1.0.8:6443.
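The mapping from an operation to an HTTP request can be sketched in a few lines of Python. This is an illustrative helper, not part of any Kubernetes client library: the name `rest_call` and the verb table are assumptions made for this example, and it only covers namespaced resources in the core `/api/v1` group.

```python
# Illustrative only: maps a Kubernetes request verb to the HTTP method and
# path used in the requests listed above. `rest_call` is a hypothetical helper.
HTTP_METHOD = {
    "list": "GET",
    "get": "GET",
    "watch": "GET",
    "create": "POST",
    "update": "PUT",
    "patch": "PATCH",
    "delete": "DELETE",
}

# Verbs that address the whole collection rather than a single named object.
COLLECTION_VERBS = {"list", "watch", "create"}


def rest_call(verb, namespace, resource, name=None):
    """Return (method, path) for a namespaced core-group resource."""
    path = f"/api/v1/namespaces/{namespace}/{resource}"
    if verb not in COLLECTION_VERBS:
        if name is None:
            raise ValueError(f"verb {verb!r} needs an object name")
        path += f"/{name}"
    if verb == "watch":
        # A watch is an ordinary GET with a query parameter.
        path += "?watch=1"
    return HTTP_METHOD[verb], path


if __name__ == "__main__":
    print(rest_call("list", "payments", "pods"))
    print(rest_call("patch", "payments", "pods", "web-1"))
```

Seven request verbs collapse onto five HTTP methods because `get`, `list`, and `watch` are all `GET` requests that differ only in path and query string.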

Category 1 — Human CLI Operations (kubectl + oc)

kubectl — standard Kubernetes operations

    # Every one of these becomes a REST call through the private endpoint

    # LIST pods → GET /api/v1/namespaces/payments/pods
    kubectl get pods -n payments

    # CREATE deployment → POST /apis/apps/v1/namespaces/payments/deployments
    # (apply first GETs the object; if it already exists it sends a PATCH instead)
    kubectl apply -f deployment.yaml

    # EXEC into pod → POST + UPGRADE to SPDY/WebSocket
    kubectl exec -it web-1 -n payments -- /bin/bash

    # PORT-FORWARD → POST + WebSocket tunnel
    kubectl port-forward svc/my-app 8080:80

    # LOGS → GET /api/v1/namespaces/payments/pods/web-1/log
    kubectl logs web-1 -n payments --follow

    # WATCH resources → GET with ?watch=1 (long-lived streaming connection)
    kubectl get pods --watch

oc CLI — OpenShift-specific additions

oc wraps kubectl completely and adds calls to OpenShift-specific API groups:

    # OpenShift Route → POST /apis/route.openshift.io/v1/namespaces/.../routes
    oc expose svc/my-app

    # Project (OpenShift namespace wrapper)
    # → POST /apis/project.openshift.io/v1/projectrequests
    oc new-project my-team

    # ImageStream → GET /apis/image.openshift.io/v1/namespaces/.../imagestreams
    oc get imagestreams

    # BuildConfig → POST /apis/build.openshift.io/v1/namespaces/.../buildconfigs/my-app/instantiate
    # (the API server creates the Build object in response)
    oc start-build my-app

    # DeploymentConfig (legacy OpenShift resource)
    # → GET /apis/apps.openshift.io/v1/namespaces/.../deploymentconfigs
    oc rollout latest dc/my-app

    # SCC inspection → GET /apis/security.openshift.io/v1/securitycontextconstraints
    oc get scc

Category 2 — Operators and Controllers

Operators are long-running processes inside the cluster that keep watch connections to the API server open for their entire lifetime, which typically makes them the busiest category of API consumers by number of open streams.

The watch loop — how operators work

    // Every operator runs this pattern against the API server
    // Connection: persistent HTTP/2 stream to 10.1.0.8:6443

    // 1. LIST: get current state (one-time at startup)
    GET /apis/apps/v1/namespaces/payments/deployments
    → Returns: all deployments + resourceVersion: 48291

    // 2. WATCH: subscribe to changes (long-lived streaming request)
    GET /apis/apps/v1/namespaces/payments/deployments?watch=1&resourceVersion=48291
    → Server keeps the stream open until the watch times out; the client then
      re-watches from the last resourceVersion it saw
    → Pushes events as they occur:
      {"type":"MODIFIED","object":{"metadata":{"name":"web"},...}}
      {"type":"ADDED","object":{"metadata":{"name":"worker"},...}}
      {"type":"DELETED","object":{"metadata":{"name":"old"},...}}

    // 3. RECONCILE: when event received, fix actual → desired state
    PATCH /apis/apps/v1/namespaces/payments/replicasets/web-abc
    → The ReplicaSet controller then creates/deletes pods to match desired replicas

    // 4. STATUS UPDATE: write observed state back
    PATCH /apis/apps/v1/namespaces/payments/deployments/web/status
    → {"observedGeneration": 5, "availableReplicas": 3}
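The event-handling half of the loop above can be sketched as follows. Watch responses arrive as newline-delimited JSON, so the example feeds a list of lines into a small cache-updating function. The function name `apply_watch_events`, the sample stream, and its `resourceVersion` values are made up for illustration; real operators normally rely on a client library's informer rather than hand-written code like this.

```python
import json


def apply_watch_events(cache, lines, resource_version):
    """Apply newline-delimited watch events to a local cache.

    `cache` maps object name -> object. Returns the newest resourceVersion
    seen, so a dropped watch can be resumed from that point.
    """
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        if event["type"] == "ERROR":
            # e.g. the requested resourceVersion is too old: re-LIST and start over.
            raise RuntimeError(f"watch failed: {event['object']}")
        obj = event["object"]
        meta = obj["metadata"]
        if event["type"] in ("ADDED", "MODIFIED"):
            cache[meta["name"]] = obj
        elif event["type"] == "DELETED":
            cache.pop(meta["name"], None)
        resource_version = meta.get("resourceVersion", resource_version)
    return resource_version


# Made-up sample stream in the shape the API server sends.
stream = [
    '{"type":"MODIFIED","object":{"metadata":{"name":"web","resourceVersion":"48292"}}}',
    '{"type":"ADDED","object":{"metadata":{"name":"worker","resourceVersion":"48293"}}}',
    '{"type":"DELETED","object":{"metadata":{"name":"old","resourceVersion":"48294"}}}',
]

# State as it might look after the initial LIST at resourceVersion 48291.
cache = {
    "web": {"metadata": {"name": "web", "resourceVersion": "48290"}},
    "old": {"metadata": {"name": "old", "resourceVersion": "48100"}},
}
last_rv = apply_watch_events(cache, stream, "48291")
print(sorted(cache), last_rv)
```

Tracking the last `resourceVersion` is what lets a client reconnect without missing events or replaying the full list.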

Built-in OpenShift operators that run this loop continuously

| Operator | What it watches | What it does |
|---|---|---|
| openshift-apiserver-operator | apiservers.config.openshift.io | Manages API server config and certs |
| cluster-version-operator | clusterversions.config.openshift.io | Drives cluster upgrades |
| machine-config-operator | machineconfigs, machineconfigpools | Applies RHCOS config to nodes |
| ingress-operator | ingresses.config.openshift.io | Manages router deployments |
| dns-operator | dnses.config.openshift.io | Manages CoreDNS config |
| network-operator | networks.config.openshift.io | Manages OVN-Kubernetes |
| image-registry-operator | configs.imageregistry.operator.openshift.io | Manages internal registry |
| authentication-operator | authentications.config.openshift.io | Manages OAuth server |

Each of these keeps watch connections open to the API server at all times. A healthy ARO cluster typically has at least 40–80 active watch streams running around the clock, and often many more, because each operator usually watches several resource types.

Category 3 — Kubelet (Node Agent)

Every worker node runs a kubelet process that maintains its own connection to the API server — reporting node health and receiving pod assignments:

    Worker node kubelet → 10.1.0.8:6443

    Outbound (kubelet → API server):
      PUT   /apis/coordination.k8s.io/v1/namespaces/kube-node-lease/leases/worker-1
                                                     every 10 seconds: node heartbeat (Lease renewal)
      PATCH /api/v1/nodes/worker-1/status            node status, sent when it changes and periodically
      PATCH /api/v1/namespaces/app/pods/web-1/status when pod state changes
      POST  /api/v1/namespaces/app/events            kubelet events (OOM, image pull)

    Inbound (API server → kubelet port 10250):
      GET https://worker-1:10250/exec/...            kubectl exec forwarding
      GET https://worker-1:10250/containerLogs/...   kubectl logs forwarding
      GET https://worker-1:10250/metrics             Prometheus scraping (Prometheus connects to the kubelet directly)

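If the node controller sees no heartbeat from a kubelet for longer than the node-monitor-grace-period (default 40 seconds), it sets the node's Ready condition to Unknown, which shows as NotReady, and taints the node. Pods are not evicted immediately: by default they tolerate that taint for 300 seconds, after which eviction begins.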
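The grace-period check amounts to a timestamp comparison. The sketch below is a simplified model, not the node controller's actual code: the function name `node_condition` and the fixed example timestamp are assumptions, and the 40-second default is the value quoted above.

```python
from datetime import datetime, timedelta, timezone

# Default quoted in the text above; on a real cluster this is a controller setting.
NODE_MONITOR_GRACE_PERIOD = timedelta(seconds=40)


def node_condition(last_heartbeat, now, grace=NODE_MONITOR_GRACE_PERIOD):
    """Return "Ready" while the last heartbeat is within the grace period."""
    return "Ready" if now - last_heartbeat <= grace else "NotReady"


# Arbitrary fixed timestamp so the example is reproducible.
now = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
print(node_condition(now - timedelta(seconds=10), now))  # one missed-free interval
print(node_condition(now - timedelta(seconds=41), now))  # past the grace period
```

With a 10-second heartbeat interval, a 40-second grace period means a node can miss roughly three consecutive heartbeats before its status changes.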

Category 4 — CI/CD Pipelines

Self-hosted CI/CD runners inside the VNet authenticate to the API server using a service account token:

    # Service account for CI/CD, scoped to a specific namespace
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: cicd-deployer
      namespace: payments
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: deployer
      namespace: payments
    rules:
      - apiGroups: ["apps"]
        resources: ["deployments", "replicasets"]
        verbs: ["get", "list", "create", "update", "patch"]
      - apiGroups: [""]
        resources: ["pods", "services", "configmaps"]
        verbs: ["get", "list", "create", "update", "patch"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: cicd-deployer-binding
      namespace: payments
    roleRef:
      apiGroup: rbac.authorization.k8s.io  # required field in roleRef
      kind: Role
      name: deployer
    subjects:
      - kind: ServiceAccount
        name: cicd-deployer
        namespace: payments
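To see what the `deployer` Role above does and does not permit, the rule matching can be approximated in Python. This is a simplified model of RBAC evaluation (it ignores `resourceNames`, subresources, and non-resource URLs), and `is_allowed` is a hypothetical helper rather than a Kubernetes API.

```python
# Rules transcribed from the `deployer` Role above.
DEPLOYER_RULES = [
    {
        "apiGroups": ["apps"],
        "resources": ["deployments", "replicasets"],
        "verbs": ["get", "list", "create", "update", "patch"],
    },
    {
        "apiGroups": [""],
        "resources": ["pods", "services", "configmaps"],
        "verbs": ["get", "list", "create", "update", "patch"],
    },
]


def _matches(allowed, value):
    """A rule field matches on an exact value or the "*" wildcard."""
    return "*" in allowed or value in allowed


def is_allowed(rules, api_group, resource, verb):
    """RBAC is additive: the request is allowed if any single rule matches."""
    return any(
        _matches(rule["apiGroups"], api_group)
        and _matches(rule["resources"], resource)
        and _matches(rule["verbs"], verb)
        for rule in rules
    )


if __name__ == "__main__":
    for group, resource, verb in [
        ("apps", "deployments", "patch"),
        ("", "pods", "delete"),
        ("", "secrets", "get"),
        ("apps", "deployments", "watch"),
    ]:
        print(group or "core", resource, verb, is_allowed(DEPLOYER_RULES, group, resource, verb))
```

The check shows that `delete` and access to secrets are denied, which is the point of the scoping. It also shows that `watch` is not granted; commands that stream changes generally need it, so the Role may need extending in practice.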

GitHub Actions pipeline using this service account:

    - name: Deploy to ARO
      run: |
        # Authenticate with service account token; all traffic goes to 10.1.0.8:6443
        oc login ${{ secrets.ARO_API_URL }} \
          --token ${{ secrets.CICD_SA_TOKEN }}

        # Each command = REST call through private endpoint
        oc set image deployment/web \
          web=acrprod.azurecr.io/my-app:${{ github.sha }} \
          -n payments
        oc rollout status deployment/web -n payments

Category 5 — Admission Webhooks

Admission webhooks add an external hop to the API server request pipeline. For every request that matches a webhook's rules, the API server calls out to the webhook service before persisting the object:

    kubectl apply -f pod.yaml
        ↓
    API server receives POST /api/v1/namespaces/payments/pods
        ↓
    Authn + RBAC pass
        ↓
    Mutating admission webhook:
      API server → POST https://gatekeeper-webhook.gatekeeper-system.svc:443/mutate
      Webhook adds labels, sets resource limits, injects sidecars
      → Returns a JSON patch that the API server applies to the pod spec
        ↓
    Validating admission webhook:
      API server → POST https://gatekeeper-webhook.gatekeeper-system.svc:443/validate
      Checks policy: must have resource limits, no root, valid image registry
      → Returns: allowed: true (or denied with reason)
        ↓
    Persist to etcd → notify watchers → return 201 Created
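The bodies a webhook sends back in the mutating and validating steps above are `AdmissionReview` objects. The sketch below builds both kinds of response using the `admission.k8s.io/v1` field names; the helper names, the sample request, and the `team` label are hypothetical, and a real webhook would also need an HTTPS server around this.

```python
import base64
import json


def mutate_response(review, patch_ops):
    """Allow the request and attach a base64-encoded JSONPatch."""
    response = {"uid": review["request"]["uid"], "allowed": True}
    if patch_ops:
        response["patchType"] = "JSONPatch"
        response["patch"] = base64.b64encode(
            json.dumps(patch_ops).encode("utf-8")
        ).decode("ascii")
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }


def validate_response(review, reason=None):
    """Allow the request, or deny it with a 403 status when a reason is given."""
    response = {"uid": review["request"]["uid"], "allowed": reason is None}
    if reason is not None:
        response["status"] = {"code": 403, "message": reason}
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }


# Made-up incoming request, trimmed to the fields this example reads.
review = {
    "apiVersion": "admission.k8s.io/v1",
    "kind": "AdmissionReview",
    "request": {"uid": "example-uid-123", "object": {"metadata": {"name": "web-1"}}},
}
patch_ops = [{"op": "add", "path": "/metadata/labels", "value": {"team": "payments"}}]

mutated = mutate_response(review, patch_ops)
denied = validate_response(review, "containers must set resource limits")
print(json.dumps(denied, indent=2))
```

The `uid` must be echoed back from the request so the API server can match the response to the call it made.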

Common admission webhooks in ARO:

| Webhook | Purpose |
|---|---|
| OPA Gatekeeper | Policy enforcement: block non-compliant resources |
| Kyverno | Policy as code: mutate, validate, generate |
| Istio / OpenShift Service Mesh | Inject Envoy sidecar into pods automatically |
| Red Hat ACM | Multi-cluster governance policies |
| Cert-manager | Validate and default cert-manager resources such as Certificates and Issuers |

Category 6 — Monitoring and Observability

    # Prometheus scrapes API server metrics via the API endpoint
    GET https://10.1.0.8:6443/metrics
    # Returns: apiserver_request_total, apiserver_request_duration_seconds,
    #          etcd_request_duration_seconds, workqueue_depth, ...

    # Health endpoints checked by Azure ARO service monitor
    GET https://10.1.0.8:6443/healthz → "ok"
    GET https://10.1.0.8:6443/readyz  → "ok"
    GET https://10.1.0.8:6443/livez   → "ok"

    # OpenShift console reads cluster state continuously
    GET /apis/config.openshift.io/v1/clusterversions/version
    GET /api/v1/namespaces?limit=500
    GET /apis/project.openshift.io/v1/projects
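The `/metrics` response is plain text in the Prometheus exposition format. As a rough illustration of what a scraper does with it, the sketch below totals `apiserver_request_total` by HTTP status code. The sample payload and its numbers are invented, the function name `requests_by_code` is an assumption, and the parser handles only simple counter lines; a production setup would use Prometheus itself or an existing parsing library.

```python
import re
from collections import defaultdict

# Invented sample in the exposition format; real output has many more labels.
SAMPLE = """\
# HELP apiserver_request_total Counter of apiserver requests
# TYPE apiserver_request_total counter
apiserver_request_total{code="200",resource="pods",verb="GET"} 1500
apiserver_request_total{code="201",resource="pods",verb="POST"} 40
apiserver_request_total{code="409",resource="deployments",verb="PUT"} 3
apiserver_request_total{code="200",resource="deployments",verb="GET"} 250
"""

# metric_name{label="value",...} value
LINE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)'
)
LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def requests_by_code(text, metric="apiserver_request_total"):
    """Sum the samples of one counter, grouped by the `code` label."""
    totals = defaultdict(float)
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue  # skip blanks and HELP/TYPE comments
        match = LINE.match(line)
        if not match or match["name"] != metric:
            continue
        labels = dict(LABEL.findall(match["labels"] or ""))
        totals[labels.get("code", "")] += float(match["value"])
    return dict(totals)


if __name__ == "__main__":
    print(requests_by_code(SAMPLE))
```

Grouping by `code` gives a quick view of how many requests are being rejected (4xx) or failing (5xx) relative to the total.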

The Request Pipeline — What Happens Inside

Every request through the private endpoint traverses this exact pipeline inside kube-apiserver:

    TLS handshake on 10.1.0.8:6443
        ↓
    1. AUTHENTICATION: who are you?
       • OIDC token (Entra ID) → extract user + groups
       • x509 client cert → extract CN as username
       • Bearer token → look up service account
       • Failure → 401 Unauthorized

    2. AUTHORIZATION (RBAC): are you allowed?
       • Check: user + groups + verb + resource + namespace
       • ClusterRoleBinding / RoleBinding lookup
       • Failure → 403 Forbidden

    3. ADMISSION CONTROL: is this allowed by policy?
       • Mutating webhooks (modify the object)
       • Built-in admission plugins (ResourceQuota, LimitRanger)
       • OpenShift SCC admission for pods
       • Validating webhooks (accept or reject)
       • Failure → 400/403 with reason

    4. VALIDATION: is the object schema correct?
       (in practice this runs after mutating admission and before validating admission)
       • OpenAPI schema validation
       • CRD schema validation
       • Field immutability checks
       • Failure → 422 Unprocessable Entity

    5. PERSIST TO etcd
       • Serialise to protobuf (built-in types; custom resources are stored as JSON)
       • Encrypt at rest when etcd encryption is enabled (AES-CBC or AES-GCM)
       • Write to etcd with optimistic concurrency (resourceVersion)
       • Failure → 409 Conflict (resourceVersion mismatch)

    6. NOTIFY WATCHERS
       • Push event to all active watch streams matching the resource
       • Controllers, operators, scheduler, kubelet all receive notification

    7. RETURN RESPONSE
       • 200 OK (GET)
       • 201 Created (POST)
       • 200 OK with updated object (PATCH/PUT)
       • 404 Not Found
       • Streaming response for watch/exec/logs/port-forward
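The 409 Conflict in the persistence stage is the visible side of optimistic concurrency: a write carries the `resourceVersion` the client last read, and is rejected if the stored object has moved on. The in-memory `Store` below is a toy stand-in for the API server and etcd, written only to show the read-modify-write retry pattern; none of these class or function names come from Kubernetes.

```python
class Conflict(Exception):
    """Stands in for an HTTP 409 Conflict response."""


class Store:
    """Toy object store with a global, monotonically increasing version."""

    def __init__(self):
        self._objects = {}
        self._version = 0

    def _next_version(self):
        self._version += 1
        return str(self._version)

    def create(self, key, obj):
        self._objects[key] = {**obj, "resourceVersion": self._next_version()}
        return dict(self._objects[key])

    def get(self, key):
        return dict(self._objects[key])  # copy, like a fresh GET

    def update(self, key, obj):
        current = self._objects[key]
        if obj.get("resourceVersion") != current["resourceVersion"]:
            raise Conflict(f"{key}: stale resourceVersion {obj.get('resourceVersion')}")
        self._objects[key] = {**obj, "resourceVersion": self._next_version()}
        return dict(self._objects[key])


def update_with_retry(store, key, mutate, attempts=5):
    """Re-read and re-apply the change whenever the write hits a conflict."""
    for _ in range(attempts):
        obj = store.get(key)
        mutate(obj)
        try:
            return store.update(key, obj)
        except Conflict:
            continue
    raise Conflict(f"{key}: gave up after {attempts} attempts")


store = Store()
store.create("web", {"replicas": 1})

a = store.get("web")  # two clients read the same version
b = store.get("web")
a["replicas"] = 3
store.update("web", a)  # first writer wins

b["replicas"] = 5
try:
    store.update("web", b)  # second writer holds a stale copy
    stale_rejected = False
except Conflict:
    stale_rejected = True

final = update_with_retry(store, "web", lambda o: o.update(replicas=5))
print(stale_rejected, final)
```

Retrying from a fresh read, rather than resending the stale object, is what prevents one controller from silently overwriting another's change.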

API Groups — Kubernetes vs OpenShift

The API server serves two parallel API surfaces — Kubernetes core APIs and OpenShift extension APIs — all through the same 10.1.0.8:6443 endpoint:

    Kubernetes core APIs:
      /api/v1/                            pods, services, configmaps, secrets, nodes, persistentvolumes
      /apis/apps/v1/                      deployments, replicasets, statefulsets, daemonsets
      /apis/batch/v1/                     jobs, cronjobs
      /apis/rbac.authorization.k8s.io/    clusterroles, rolebindings
      /apis/storage.k8s.io/               storageclasses, volumeattachments
      /apis/networking.k8s.io/            ingresses, networkpolicies

    OpenShift extension APIs:
      /apis/route.openshift.io/           routes (OpenShift ingress primitive)
      /apis/project.openshift.io/         projects (namespace + RBAC wrapper)
      /apis/build.openshift.io/           buildconfigs, builds
      /apis/image.openshift.io/           imagestreams, imagestreamtags
      /apis/apps.openshift.io/            deploymentconfigs (legacy)
      /apis/security.openshift.io/        securitycontextconstraints
      /apis/config.openshift.io/          cluster-wide config (DNS, network, auth)
      /apis/operator.openshift.io/        operator configuration resources
      /apis/machine.openshift.io/         machines, machinesets (MachineAPI)
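The split between `/api` and `/apis` follows directly from the `apiVersion` field of a manifest: the legacy core group has no group name, and every other group is served under `/apis/<group>/<version>`. A small sketch of that rule follows; the helper names are hypothetical, and the `.openshift.io` suffix test is a convention drawn from the groups listed above rather than an official classification.

```python
def api_prefix(api_version):
    """Map a manifest apiVersion to its URL prefix on the API server."""
    if "/" not in api_version:
        return f"/api/{api_version}"  # core group, e.g. "v1"
    return f"/apis/{api_version}"  # named group, e.g. "apps/v1"


def is_openshift_group(api_version):
    """True when the group name ends in .openshift.io."""
    group = api_version.rpartition("/")[0]  # "" for the core group
    return group.endswith(".openshift.io")


if __name__ == "__main__":
    for version in ["v1", "apps/v1", "route.openshift.io/v1"]:
        print(version, api_prefix(version), is_openshift_group(version))
```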

Key Takeaway

The ARO API server private endpoint at 10.1.0.8:6443 is not just the entry point for human CLI commands; it is the nervous system of the entire cluster. Every automated process depends on this single TLS endpoint: the 40+ built-in OpenShift operators maintaining cluster state, every kubelet heartbeating from every worker node every 10 seconds, every CI/CD deployment, every request that triggers an admission webhook, every Prometheus scrape and health check. Making it private removes the API server from direct internet exposure, while the seven-stage request pipeline inside the API server ensures every operation is authenticated, authorised, policy-checked, and validated, and that every write is durably persisted before a response is returned.