Private ARO Cluster

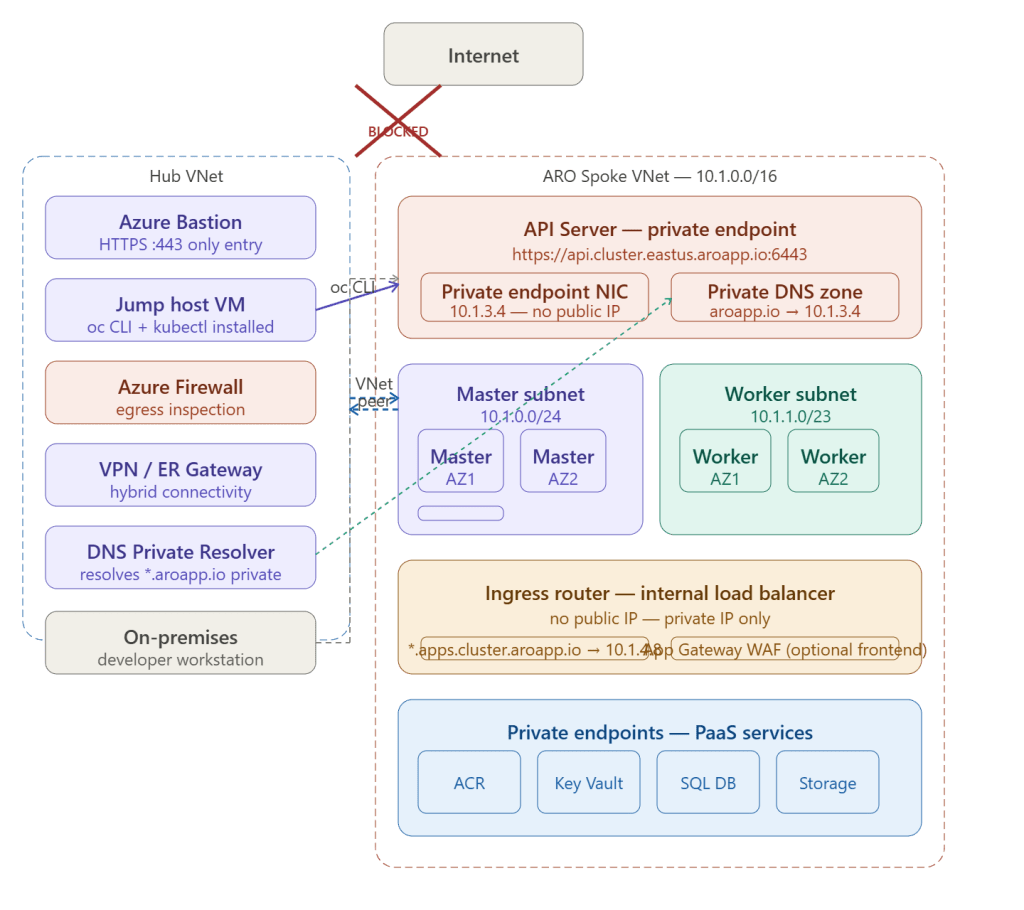

A private ARO cluster removes public IP addresses from both the Kubernetes API server and the ingress router, so neither endpoint is reachable from the internet. Every connection to the cluster must arrive over a private path such as VNet peering, ExpressRoute, or a VPN tunnel.

Public vs Private ARO — What Changes

| Component | Public cluster | Private cluster |
|---|---|---|
| API server endpoint | Public IP + DNS | Private endpoint IP only |
| Ingress router | Public load balancer | Internal load balancer |
| Worker node IPs | Private (always) | Private (always) |
| Master node IPs | Private (always) | Private (always) |
| Access method | Any internet browser | VPN / ER / Bastion only |
| DNS resolution | Public DNS | Private DNS zone |
| Attack surface | API port 6443 exposed | Zero public exposure |

How the API Server Is Hidden — The Mechanism

When you deploy a private ARO cluster, Azure does three things automatically:

1. API Server gets a Private Endpoint NIC

Instead of a public load balancer frontend, the API server is exposed exclusively through a private endpoint — a NIC in your VNet subnet with a private IP:

Public cluster:

api.cluster.eastus.aroapp.io → 20.x.x.x (public IP)

Anyone on internet can reach :6443

Private cluster:

api.cluster.eastus.aroapp.io → 10.1.3.4 (private IP in your VNet)

Only reachable from within the VNet or peered networks

The private endpoint is deployed into your ARO master subnet automatically during cluster creation. No public IP is allocated.

2. A Private DNS Zone Is Created Automatically

ARO creates a private DNS zone linked to your VNet so the API server FQDN resolves to the private endpoint IP:

Private DNS zone: cluster.eastus.aroapp.io

A record: api → 10.1.3.4

A record: *.apps → 10.1.4.8 (ingress internal LB)

Linked to: ARO spoke VNet (link the hub VNet yourself)

This means any VM in a VNet linked to this zone can resolve api.cluster.eastus.aroapp.io and get 10.1.3.4 without a public DNS lookup.
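One way to confirm this behaviour from a VM is to check that whatever address the API FQDN resolves to falls in a private range. The helper below is a minimal sketch; the function name `is_private_ipv4` is an illustration, not part of any Azure or OpenShift tooling, and it assumes its input is already a well-formed dotted-quad IPv4 address.

```shell
# Return 0 if the argument is an RFC 1918 private IPv4 address, 1 otherwise.
# Assumes the input is already a valid dotted-quad address.
is_private_ipv4() {
  case "$1" in
    10.*) return 0 ;;
    192.168.*) return 0 ;;
    172.*)
      # 172.16.0.0/12 covers second octets 16 through 31
      second=${1#172.}
      second=${second%%.*}
      case "$second" in
        ''|*[!0-9]*) return 1 ;;
      esac
      [ "$second" -ge 16 ] && [ "$second" -le 31 ]
      return $?
      ;;
    *) return 1 ;;
  esac
}
```

On a VM with `dig` installed, something like `is_private_ipv4 "$(dig +short api.cluster.eastus.aroapp.io | tail -n1)" && echo "resolves privately"` would exercise it against the example FQDN used here.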

3. Ingress Router Gets an Internal Load Balancer

The OpenShift ingress router (which handles *.apps.cluster.eastus.aroapp.io routes) is fronted by an Azure Internal Load Balancer with a private frontend IP:

Public cluster: *.apps → Azure Public LB → 20.x.x.x

Private cluster: *.apps → Azure Internal LB → 10.1.4.8

Applications running on the cluster are only reachable from inside the VNet or connected networks.

Deploying a Private ARO Cluster

# 1. Create resource group and VNet
az group create --name rg-aro --location eastus

az network vnet create \
  --resource-group rg-aro \
  --name aro-spoke-vnet \
  --address-prefixes 10.1.0.0/16

# 2. Create master subnet with private link service network policies disabled (required for ARO)
az network vnet subnet create \
  --resource-group rg-aro \
  --vnet-name aro-spoke-vnet \
  --name master-subnet \
  --address-prefixes 10.1.0.0/24 \
  --disable-private-link-service-network-policies true

# 3. Create worker subnet
az network vnet subnet create \
  --resource-group rg-aro \
  --vnet-name aro-spoke-vnet \
  --name worker-subnet \
  --address-prefixes 10.1.2.0/23

# 4. Deploy private ARO cluster with a private API server and private ingress
az aro create \
  --resource-group rg-aro \
  --name aro-prod \
  --vnet aro-spoke-vnet \
  --master-subnet master-subnet \
  --worker-subnet worker-subnet \
  --apiserver-visibility Private \
  --ingress-visibility Private \
  --master-vm-size Standard_D8s_v3 \
  --worker-vm-size Standard_D16s_v3 \
  --worker-count 3 \
  --pull-secret @pull-secret.txt

# Takes ~35 minutes to complete
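Subnet creation tends to fail on address prefixes whose address is not on the network boundary for its prefix length (for example, a /23 that starts on an odd third octet). The helper below is a small sketch for checking a prefix before running the commands; `cidr_is_aligned` is a hypothetical name, and it assumes plain decimal octets without leading zeros.

```shell
# Return 0 if an IPv4 CIDR has no host bits set (the address sits on its
# own network boundary), 1 otherwise.
cidr_is_aligned() {
  addr=${1%/*}      # part before the slash
  prefix=${1#*/}    # part after the slash

  # Split the dotted quad into $1..$4
  old_ifs=$IFS
  IFS=.
  set -- $addr
  IFS=$old_ifs

  ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  host_mask=$(( (1 << (32 - $prefix)) - 1 ))

  [ $(( $ip & $host_mask )) -eq 0 ]
}
```

For instance, `cidr_is_aligned 10.1.0.0/24 && echo ok` succeeds because the last eight bits of the address are all zero.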

The Three Access Paths

Path 1 — Azure Bastion + Jump Host (most common)

The simplest pattern — a small Linux VM in the hub VNet with oc and kubectl installed, accessed securely via Bastion:

# 1. Admin opens Azure portal → connects via Bastion to jump-host-vm

# 2. On jump host: get cluster credentials
az aro list-credentials \
  --resource-group rg-aro \
  --name aro-prod
# Output:
#   kubeadminPassword: "XXXXX-XXXXX-XXXXX-XXXXX"
#   kubeadminUsername: "kubeadmin"

# 3. Get API server URL
API_URL=$(az aro show \
  --resource-group rg-aro \
  --name aro-prod \
  --query apiserverProfile.url -o tsv)

# 4. Login (works because the jump host is in a peered VNet)
oc login "$API_URL" \
  --username kubeadmin \
  --password XXXXX-XXXXX-XXXXX-XXXXX

# 5. Verify
oc get nodes
oc get clusterversion
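If the login step hangs, it can help to separate DNS and network reachability from authentication. The sketch below pulls the host name out of the API URL so it can be fed to a resolver or port check; `api_host_from_url` is an illustrative helper name, not an `oc` or `az` feature.

```shell
# Extract the host name from an API URL such as https://host:6443/
api_host_from_url() {
  host=${1#*://}     # drop the scheme
  host=${host%%/*}   # drop any path
  host=${host%%:*}   # drop the port
  printf '%s\n' "$host"
}
```

On a jump host with netcat available, `nc -z -w 3 "$(api_host_from_url "$API_URL")" 6443` would then test whether port 6443 is reachable before any credentials are involved.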

Path 2 — ExpressRoute / VPN from on-premises

On-premises developers access the private API server directly from their workstations — but DNS must be configured to resolve the ARO private DNS zone:

On-premises developer workstation

↓

Corporate DNS server: api.cluster.eastus.aroapp.io

↓ conditional forward to Azure DNS Private Resolver (10.0.5.4)

Azure DNS Private Resolver

↓ linked private DNS zone: aroapp.io → 10.1.3.4

Returns: 10.1.3.4

Developer runs: oc login https://api.cluster.eastus.aroapp.io:6443

Traffic travels: workstation → MPLS → ER Gateway → hub VNet peering → ARO spoke → API server

On-premises DNS server conditional forwarder:

Zone: aroapp.io

Forward to: 10.0.5.4 (DNS Private Resolver inbound endpoint)
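How the forwarder is expressed depends on the on-premises DNS product, which the setup above does not specify. Assuming a BIND server purely for illustration, the sketch below prints a forward-zone stanza for a zone and one or more forwarder IPs; `bind_forward_zone` is a hypothetical helper.

```shell
# Print a BIND forward-zone stanza.
# Usage: bind_forward_zone <zone> <forwarder-ip> [<forwarder-ip> ...]
bind_forward_zone() {
  zone=$1
  shift
  forwarders=''
  for ip in "$@"; do
    forwarders="$forwarders $ip;"
  done
  printf 'zone "%s" {\n    type forward;\n    forward only;\n    forwarders {%s };\n};\n' \
    "$zone" "$forwarders"
}
```

Running `bind_forward_zone aroapp.io 10.0.5.4` emits a stanza that could be reviewed and pasted into the server configuration; other DNS servers have their own equivalent of a conditional forwarder.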

Path 3 — CI/CD Pipeline (GitHub Actions / Azure DevOps)

For automated deployments, pipelines must also reach the private API server. Use a self-hosted runner inside the VNet:

# GitHub Actions: self-hosted runner in hub VNet
name: Deploy to ARO
on: [push]

jobs:
  deploy:
    runs-on: self-hosted   # ← runner VM inside Azure VNet
    steps:
      - uses: actions/checkout@v4

      - name: Login to ARO
        run: |
          oc login ${{ secrets.ARO_API_URL }} \
            --token ${{ secrets.ARO_SERVICE_ACCOUNT_TOKEN }}

      - name: Deploy application
        run: |
          oc apply -f k8s/
          oc rollout status deployment/my-app

The self-hosted runner is a VM in the hub VNet — it can resolve the private API server DNS and reach port 6443 over VNet peering.

Private DNS — The Critical Detail

After cluster creation, ARO automatically creates a private DNS zone. You must link this zone to every VNet that needs to resolve the API server — including the hub VNet where your jump host and DNS Private Resolver live:

# ARO creates this automatically, linked to the ARO spoke VNet.
# You must manually link it to the hub VNet.

# resourceGroupId is a full resource ID; --resource-group needs only the name (5th path segment)
ARO_MANAGED_RG=$(az aro show -g rg-aro -n aro-prod \
  --query clusterProfile.resourceGroupId -o tsv | cut -d'/' -f5)

PRIVATE_ZONE=$(az network private-dns zone list \
  --resource-group "$ARO_MANAGED_RG" \
  --query "[?contains(name,'aroapp.io')].name" -o tsv)

# Link to hub VNet
az network private-dns link vnet create \
  --resource-group "$ARO_MANAGED_RG" \
  --zone-name "$PRIVATE_ZONE" \
  --name link-to-hub-vnet \
  --virtual-network "$(az network vnet show \
    --resource-group rg-hub \
    --name hub-vnet --query id -o tsv)" \
  --registration-enabled false

Without this link, VMs in the hub VNet cannot resolve api.cluster.eastus.aroapp.io: their queries fall through to public DNS, which returns NXDOMAIN for a private cluster.
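One detail that is easy to trip over when scripting the link: `clusterProfile.resourceGroupId` holds a full Azure resource ID, while `--resource-group` arguments expect just the group name. The sketch below extracts the name defensively; `resource_group_from_id` is an illustrative helper, not an `az` command.

```shell
# Print the resource group name from an Azure resource ID of the form
# /subscriptions/<sub-id>/resourceGroups/<name>[/...]; return 1 if the
# input does not look like a resource ID.
resource_group_from_id() {
  name=$(printf '%s' "$1" |
    sed -n 's|^/subscriptions/[^/]*/[Rr]esource[Gg]roups/\([^/]*\).*$|\1|p')
  [ -n "$name" ] && printf '%s\n' "$name"
}
```

It accepts both `resourceGroups` and `resourcegroups` spellings, since the casing of that path segment varies between API responses.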

Entra ID (AAD) Integration for Developer Access

Replace the kubeadmin local account with Entra ID authentication — developers log in with their corporate credentials:

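# Configure Entra ID as an OpenID Connect identity provider on ARO.
# (az aro update --client-id/--client-secret only updates the cluster's own
# service principal credentials; it does not set up user login.)

# Store the app registration secret where the cluster OAuth configuration can reference it
oc create secret generic openid-client-secret-azuread \
  --namespace openshift-config \
  --from-literal=clientSecret=<app-registration-secret>

# Then add an OpenID identity provider to the cluster OAuth resource
# (oc edit oauth cluster), using <app-registration-client-id> as the clientID,
# the secret above as the clientSecret, and your tenant's issuer URL.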

# Grant cluster-admin to an AAD group
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: aro-cluster-admins
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: Group
    apiGroup: rbac.authorization.k8s.io
    name: <aad-group-object-id>   # e.g. Platform Engineering team
---
# Grant view-only to a developer group
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: dev-view
  namespace: my-app
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: view
subjects:
  - kind: Group
    apiGroup: rbac.authorization.k8s.io
    name: <dev-aad-group-object-id>

Developers now log in via:

oc login "$API_URL"   # redirects to Microsoft login page
# Enter corporate credentials → MFA → issued a token

Egress from a Private Cluster

A private cluster still needs outbound internet access for Red Hat image registries and update servers. Force all egress through Azure Firewall via UDR on both subnets:

# Route table for master and worker subnets
az network route-table create \
  --resource-group rg-aro-network \
  --name rt-aro-subnets

az network route-table route create \
  --resource-group rg-aro-network \
  --route-table-name rt-aro-subnets \
  --name force-to-firewall \
  --address-prefix 0.0.0.0/0 \
  --next-hop-type VirtualAppliance \
  --next-hop-ip-address 10.0.1.4   # Azure Firewall private IP

# The route table lives in a different resource group from the VNet,
# so reference it by resource ID rather than by name
RT_ID=$(az network route-table show \
  --resource-group rg-aro-network \
  --name rt-aro-subnets \
  --query id -o tsv)

# Associate with master subnet
az network vnet subnet update \
  --resource-group rg-aro \
  --vnet-name aro-spoke-vnet \
  --name master-subnet \
  --route-table "$RT_ID"

# Associate with worker subnet
az network vnet subnet update \
  --resource-group rg-aro \
  --vnet-name aro-spoke-vnet \
  --name worker-subnet \
  --route-table "$RT_ID"

Required Azure Firewall FQDN allow rules for private ARO:

quay.io                      # Red Hat image registry
registry.redhat.io           # Red Hat registry
registry.access.redhat.com   # RHEL content
cdn.quay.io                  # CDN for quay
*.blob.core.windows.net      # Azure storage (etcd backups, images)
*.servicebus.windows.net     # ARO monitoring
management.azure.com         # Azure ARM API
login.microsoftonline.com    # Entra ID auth
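When troubleshooting a blocked pull or update, it is handy to check quickly whether a given host name is covered by the list above. The sketch below approximates the match with shell glob patterns; `ALLOWED_FQDNS` and `fqdn_allowed` are illustrative names, and the glob semantics are only an approximation of how Azure Firewall evaluates wildcard FQDNs.

```shell
# Allowlist mirroring the FQDN rules listed above (space-separated patterns)
ALLOWED_FQDNS='quay.io registry.redhat.io registry.access.redhat.com cdn.quay.io *.blob.core.windows.net *.servicebus.windows.net management.azure.com login.microsoftonline.com'

# Return 0 if the host name matches any allowlist pattern, 1 otherwise.
fqdn_allowed() {
  set -f   # keep the * patterns from expanding against local files
  for pattern in $ALLOWED_FQDNS; do
    case "$1" in
      $pattern) set +f; return 0 ;;
    esac
  done
  set +f
  return 1
}
```

For example, `fqdn_allowed mystorage.blob.core.windows.net && echo allowed` matches the wildcard storage rule, while a host that is not on the list returns a non-zero status.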

Key Takeaway

A private ARO cluster removes its public attack surface by replacing the public API server load balancer with a VNet-internal private endpoint and the public ingress load balancer with an internal one. Both endpoints resolve to private IPs through the private DNS zone. The access paths are Azure Bastion for interactive access, ExpressRoute or VPN for on-premises connectivity, and self-hosted CI/CD runners for automation. None of them requires a public IP on the cluster; note that a site-to-site VPN still crosses the internet inside an encrypted tunnel, and ExpressRoute is private but not encrypted by default.