What is RAG?

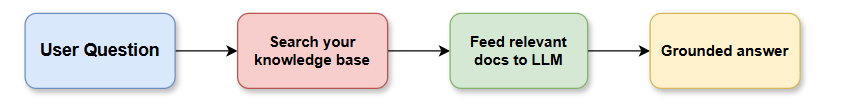

Retrieval-Augmented Generation (RAG) gives an LLM access to your private data at query time, so it answers from your documents rather than only from its training data.

User Question → Search your knowledge base → Feed relevant docs to LLM → Grounded Answer

GCP-Native RAG Architecture (Full Stack)

┌─────────────────────────────────────────────────────────────┐
│                       USER INTERFACE                        │
│           (Web App / Slack Bot / Internal Portal)           │
└──────────────────────┬──────────────────────────────────────┘
                       ↓
┌─────────────────────────────────────────────────────────────┐
│                          API LAYER                          │
│                 Cloud Run / Cloud Functions                 │
└──────┬───────────────┬──────────────────┬───────────────────┘
       ↓               ↓                  ↓
┌────────────┐ ┌─────────────┐ ┌──────────────────┐
│ Retrieval  │ │  LLM Layer  │ │ Auth & Security  │
│   Engine   │ │ (Vertex AI) │ │   (IAM / IAP)    │
└────────────┘ └─────────────┘ └──────────────────┘
       ↓
┌─────────────────────────────────────────────────────────────┐
│                        VECTOR STORE                         │
│        Vertex AI Vector Search / AlloyDB / pgvector         │
└──────────────────────┬──────────────────────────────────────┘
                       ↓
┌─────────────────────────────────────────────────────────────┐
│                  KNOWLEDGE BASE (Raw Docs)                  │
│     GCS Buckets │ BigQuery │ Drive │ Confluence │ Jira      │
└─────────────────────────────────────────────────────────────┘

GCP Services Mapping

| RAG Component | GCP Service |
|---|---|
| Document Storage | Cloud Storage (GCS) |
| Embedding Model | Vertex AI Embeddings (text-embedding-005) |
| Vector Store | Vertex AI Vector Search or AlloyDB pgvector |
| LLM | Vertex AI Gemini 1.5 Pro / Flash |
| Orchestration | Cloud Run, Cloud Functions, or Vertex AI Pipelines |
| Document parsing | Document AI |
| Data ingestion pipeline | Dataflow / Cloud Composer (Airflow) |
| Metadata & structured data | BigQuery |
| Auth & access control | IAM, Identity-Aware Proxy (IAP) |
| Monitoring | Cloud Logging, Cloud Monitoring, Vertex AI Model Monitoring |
| Secret management | Secret Manager |

Phase 1 — Document Ingestion Pipeline

[ Raw Documents ]
GCS / Drive / Confluence / SharePoint
        ↓
[ Document AI ]            ← OCR, form parsing, table extraction
        ↓
[ Chunking & Cleaning ]    ← Split into ~512 token chunks with overlap
        ↓
[ Vertex AI Embeddings ]   ← text-embedding-005 → vector per chunk
        ↓
[ Vector Store ]
Vertex AI Vector Search (managed) or AlloyDB + pgvector (flexible)
        ↓
[ Metadata → BigQuery ]    ← source, timestamp, doc_id, chunk_id
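The final step of the pipeline writes one metadata row per chunk. A minimal sketch of building those rows follows. The helper name `make_chunk_records`, the extra `chunk_index` field, and the hash-derived IDs are assumptions for illustration; the pipeline above only names the fields, not how they are generated.

```python
import hashlib
from datetime import datetime, timezone


def make_chunk_records(source_uri: str, chunks: list[str]) -> list[dict]:
    """Build one metadata row per chunk (hypothetical helper, not a GCP API).

    doc_id is a hash of the source URI and chunk_id is a hash of the
    doc_id, position, and chunk text, so re-ingesting an unchanged
    document yields the same IDs.
    """
    doc_id = hashlib.sha256(source_uri.encode("utf-8")).hexdigest()[:16]
    ingested_at = datetime.now(timezone.utc).isoformat()
    records = []
    for index, text in enumerate(chunks):
        digest = hashlib.sha256(f"{doc_id}:{index}:{text}".encode("utf-8"))
        records.append(
            {
                "source": source_uri,
                "timestamp": ingested_at,
                "doc_id": doc_id,
                "chunk_id": digest.hexdigest()[:16],
                "chunk_index": index,
            }
        )
    return records


# Example bucket path is made up for the demo.
rows = make_chunk_records(
    "gs://example-bucket/handbook.pdf", ["Intro text", "Leave policy"]
)
```

Stable IDs make re-ingestion idempotent: a changed chunk gets a new `chunk_id`, while unchanged chunks can be skipped or overwritten in place.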

Chunking Strategy (Critical for Quality)

| Strategy | Best for |
|---|---|
| Fixed size (512 tokens, 20% overlap) | General documents |
| Semantic chunking | Mixed-content docs |
| Sentence-level | FAQs, support docs |
| Section/header-based | Structured docs (manuals, wikis) |
| Parent-child chunking | Retrieve child, return parent context |
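The fixed-size strategy from the first row can be sketched as a sliding window. This version counts whitespace-separated words as a rough stand-in for model tokens, which is an assumption; a production pipeline would count tokens with the embedding model's tokenizer. The function name `chunk_fixed` is illustrative.

```python
def chunk_fixed(text: str, chunk_size: int = 512, overlap_ratio: float = 0.2) -> list[str]:
    """Split text into fixed-size windows of words with fractional overlap."""
    if chunk_size <= 0 or not 0 <= overlap_ratio < 1:
        raise ValueError("chunk_size must be > 0 and overlap_ratio in [0, 1)")
    words = text.split()
    overlap = int(chunk_size * overlap_ratio)
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(25))
pieces = chunk_fixed(sample, chunk_size=10, overlap_ratio=0.2)
```

With 25 words, a window of 10, and 20% overlap, each chunk repeats the last two words of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk.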

Phase 2 — Retrieval Engine

# Simplified RAG retrieval flow on GCP
def retrieve(query: str, top_k: int = 5):
    # 1. Embed the user query
    query_embedding = vertexai_embed(query)  # text-embedding-005

    # 2. Vector similarity search
    results = vector_search.find_neighbors(
        embedding=query_embedding,
        num_neighbors=top_k
    )

    # 3. Optional: Re-rank results
    reranked = rerank(query, results)  # Vertex AI Ranking API

    # 4. Fetch full chunk text from GCS / BigQuery
    chunks = fetch_chunks(reranked)
    return chunks
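To make the vector similarity step concrete, here is a brute-force sketch of what a nearest-neighbor lookup computes. It is an exact, in-memory stand-in written for illustration, not the Vertex AI Vector Search API; a managed service would typically use approximate search over a much larger index. The names `cosine`, `top_k_neighbors`, and `toy_index` are hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_neighbors(query_vec: list[float], index: dict[str, list[float]], k: int = 5):
    """Score every stored vector against the query and keep the k best."""
    scored = [(chunk_id, cosine(query_vec, vec)) for chunk_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional embeddings; real embeddings have hundreds of dimensions.
toy_index = {
    "chunk-a": [1.0, 0.0, 0.0],
    "chunk-b": [0.7, 0.7, 0.0],
    "chunk-c": [0.0, 0.0, 1.0],
}
neighbors = top_k_neighbors([0.9, 0.1, 0.0], toy_index, k=2)
```

Here `neighbors` holds `chunk-a` first and `chunk-b` second, because the query vector points almost entirely along the first axis.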

Retrieval Techniques (Use in Combination)

| Technique | What it does |
|---|---|
| Dense retrieval | Vector similarity (semantic search) |
| Sparse retrieval | BM25 keyword search |
| Hybrid search | Dense + sparse combined (best quality) |
| Re-ranking | Vertex AI Ranking API re-orders top results |
| HyDE | LLM generates hypothetical answer → embed that for retrieval |
| Multi-query retrieval | LLM generates N query variants → retrieve for all |
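The table does not say how dense and sparse results are combined for hybrid search. One common option is Reciprocal Rank Fusion (RRF), sketched below as an assumption rather than as the method this architecture prescribes. It needs only the ranked ID lists from each retriever, so the two score scales never have to be normalized against each other. The constant `k = 60` is a conventional default.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked ID lists; IDs ranked high in many lists win."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


dense_hits = ["c3", "c1", "c7"]   # e.g. from vector similarity
sparse_hits = ["c1", "c9", "c3"]  # e.g. from BM25 keyword search
fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
```

In this example `c1` and `c3` rise to the top because both retrievers returned them, while `c9` and `c7` each appear in only one list.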

Phase 3 — Generation (LLM Layer)

def generate_answer(query: str, chunks: list):
    context = "\n\n".join([c.text for c in chunks])
    prompt = f"""
    You are an internal AI assistant for Acme Corp.
    Answer ONLY based on the provided context.
    If the answer is not in the context, say "I don't have that information."
    Always cite the source document.

    CONTEXT:
    {context}

    QUESTION:
    {query}

    ANSWER:
    """
    response = gemini_pro.generate_content(prompt)
    return response.text
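The prompt asks the model to cite the source document, which only works if each chunk carries its source into the context. A minimal sketch of a context builder that labels every chunk and stops at a size budget is shown below. The `Chunk` dataclass, its `source` field, and the character-based budget are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str  # e.g. a file name or URI; the field name is an assumption


def build_context(chunks: list[Chunk], max_chars: int = 8000) -> str:
    """Join chunks into a numbered, source-labeled context string."""
    blocks = []
    used = 0
    for number, chunk in enumerate(chunks, start=1):
        block = f"[{number}] (source: {chunk.source})\n{chunk.text}"
        if used + len(block) > max_chars:
            break  # stop before the context budget is exceeded
        blocks.append(block)
        used += len(block)
    return "\n\n".join(blocks)


demo_chunks = [
    Chunk("PTO accrues monthly.", "hr/handbook.pdf"),
    Chunk("VPN is required off-site.", "it/policy.md"),
]
context = build_context(demo_chunks)
```

The numbered labels give the model something concrete to cite, and the same numbers can be mapped back to document links in the response.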

Gemini Models on Vertex AI

| Model | Best for |
|---|---|
| Gemini 1.5 Pro | Complex reasoning, long documents (1M context) |
| Gemini 1.5 Flash | Fast, cost-efficient responses |
| Gemini 1.0 Pro | Simpler Q&A tasks |
| Claude on Vertex | Alternative via Model Garden |

Phase 4 — API & Serving Layer

Cloud Run (containerized FastAPI)
├── POST /chat     → RAG query endpoint
├── POST /ingest   → Trigger document ingestion
├── GET  /sources  → List indexed documents
└── GET  /health   → Health check

Cloud Run is ideal because:

- Serverless, scales to zero

- Fast cold starts

- Easy CI/CD via Cloud Build

- Integrates with IAP for auth

Phase 5 — Internal AI Assistant UI

Options for the frontend:

| Option | Best for |
|---|---|
| Cloud Run + React/Next.js | Custom internal portal |
| Slack Bot | Teams already using Slack |
| Google Chat Bot | Google Workspace shops |
| Vertex AI Agent Builder | No-code, managed RAG UI |
| Looker / Looker Studio (formerly Data Studio) embed | Analytics-heavy teams |

Enterprise-Grade Features

1. Access Control (Critical)

- IAM Roles → control who can call the RAG API
- IAP → protect the web UI (Google SSO)
- Document-level ACL → filter retrieved chunks by user's permissions
- VPC Service Controls → isolate all GCP services in a perimeter
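Document-level ACL filtering is the one control here that lives in application code rather than in GCP configuration. A minimal sketch, assuming each retrieved chunk carries an `allowed_groups` set copied from its source document (a hypothetical field) and that the caller's group memberships are already known:

```python
from dataclasses import dataclass, field


@dataclass
class RetrievedChunk:
    chunk_id: str
    text: str
    allowed_groups: set[str] = field(default_factory=set)  # assumed metadata


def filter_by_acl(chunks: list[RetrievedChunk], user_groups: set[str]) -> list[RetrievedChunk]:
    """Keep only chunks that share at least one group with the user.

    Chunks with no allowed_groups are dropped (deny by default).
    """
    return [c for c in chunks if c.allowed_groups & user_groups]


retrieved = [
    RetrievedChunk("c1", "Salary bands for 2024", {"hr-admins"}),
    RetrievedChunk("c2", "How to request a laptop", {"all-staff"}),
    RetrievedChunk("c3", "Unlabeled draft"),
]
visible = filter_by_acl(retrieved, user_groups={"all-staff", "engineering"})
```

Running the filter before the prompt is built means restricted text never reaches the LLM. Where the vector store supports metadata filters, applying the same restriction at search time avoids spending `top_k` slots on chunks that will be discarded.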

2. Observability Stack

- Cloud Logging → all query logs, errors
- Cloud Monitoring → latency, throughput, error rate dashboards
- BigQuery → store all Q&A pairs for analysis
- Vertex AI Evals → measure answer quality over time
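On Cloud Run, JSON lines written to stdout are ingested by Cloud Logging as structured entries, with `severity` and `message` treated as special fields. A minimal sketch of a per-query log line follows; the helper name `log_query` and the other field names are assumptions chosen for this example.

```python
import json
import sys
import time


def log_query(question: str, chunk_ids: list[str], latency_ms: float, stream=sys.stdout) -> dict:
    """Write one structured log line per RAG query and return the entry."""
    entry = {
        "severity": "INFO",
        "message": "rag_query",
        "question": question,
        "retrieved_chunk_ids": chunk_ids,
        "latency_ms": round(latency_ms, 1),
    }
    stream.write(json.dumps(entry) + "\n")
    return entry


started = time.perf_counter()
# ... retrieval and generation would run here ...
elapsed_ms = (time.perf_counter() - started) * 1000
entry = log_query("What is the PTO policy?", ["c1", "c3"], elapsed_ms)
```

Because the fields are structured, the same entries can be routed to BigQuery through a log sink and queried for latency or retrieval analysis without parsing free text.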

3. Guardrails

- Vertex AI Safety Filters → block harmful outputs
- Grounding checks → ensure answer comes from retrieved context
- Confidence scoring → flag low-confidence answers for human review
- Citation enforcement → always return source doc + page
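Grounding checks and confidence scoring can start with a very simple heuristic before moving to a model-based evaluator. The sketch below scores an answer by the fraction of its tokens that also occur in the retrieved chunks and flags low scores for review. The lexical-overlap method, the function names, and the `0.6` threshold are all assumptions for illustration; this is a crude proxy, not a managed GCP grounding feature.

```python
import re


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_score(answer: str, chunks: list[str]) -> float:
    """Fraction of answer tokens that also appear in the retrieved chunks."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens: set[str] = set()
    for chunk in chunks:
        context_tokens |= _tokens(chunk)
    return len(answer_tokens & context_tokens) / len(answer_tokens)


def needs_review(answer: str, chunks: list[str], threshold: float = 0.6) -> bool:
    """Flag answers whose overlap with the context falls below the threshold."""
    return grounding_score(answer, chunks) < threshold


context_chunks = ["Employees accrue 1.5 PTO days per month."]
grounded = "Employees accrue 1.5 PTO days per month"
ungrounded = "The office closes at noon on Fridays"
```

Lexical overlap misses paraphrases and can be fooled by answers that reuse context words to say something false, so it is best treated as a cheap first filter ahead of the evaluation metrics discussed later.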

Full GCP RAG Stack — Production Setup

┌─ INGESTION (Batch + Real-time) ───────────────────────────────┐
│ Cloud Composer (Airflow) → Document AI → Embeddings → VectorDB│
└───────────────────────────────────────────────────────────────┘
┌─ SERVING ─────────────────────────────────────────────────────┐
│ Cloud Run (FastAPI RAG service)                               │
│ ├── Vertex AI Vector Search (retrieval)                       │
│ ├── Vertex AI Ranking API (re-rank)                           │
│ └── Gemini 1.5 Pro (generation)                               │
└───────────────────────────────────────────────────────────────┘
┌─ FRONTEND ────────────────────────────────────────────────────┐
│ Next.js on Cloud Run + IAP (Google SSO)                       │
│ or Slack / Google Chat Bot                                    │
└───────────────────────────────────────────────────────────────┘
┌─ OBSERVABILITY ───────────────────────────────────────────────┐
│ Cloud Logging → BigQuery → Looker Dashboard                   │
└───────────────────────────────────────────────────────────────┘

Vertex AI Agent Builder (Managed RAG — Fastest Path)

If you want to skip building from scratch, GCP offers a fully managed RAG solution:

- Upload docs to GCS

- Create a Data Store in Agent Builder

- Create an Agent and attach the data store

- Deploy — get a chat UI + API instantly

Great for POCs and internal tools where customization isn’t critical.

Cost Optimization Tips

| Tip | Saving |
|---|---|
| Use Gemini Flash for simple Q&A | ~10x cheaper than Pro |
| Cache frequent queries (Memorystore/Redis) | Reduce LLM calls |
| Batch embed documents overnight | Lower embedding costs |
| Limit top_k retrieval chunks | Smaller context, fewer tokens |
| Use committed use discounts on Vertex | Up to 20% off |
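The caching tip can be sketched with a small in-memory TTL cache keyed on the normalized question. The class below is a local stand-in written for illustration, not a Memorystore or Redis client; in production the same get/set pattern would sit in front of a shared cache. The class name, the normalization rule, and the one-hour default TTL are assumptions.

```python
import hashlib
import time


class QueryCache:
    """In-memory TTL cache for answers, keyed on the normalized question."""

    def __init__(self, ttl_seconds: float = 3600.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(question: str) -> str:
        # Collapse case and whitespace so trivial variants share one entry.
        normalized = " ".join(question.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, question: str):
        key = self._key(question)
        item = self._store.get(key)
        if item is None:
            return None
        expires_at, answer = item
        if self._clock() >= expires_at:
            del self._store[key]  # entry is stale, drop it
            return None
        return answer

    def set(self, question: str, answer: str) -> None:
        self._store[self._key(question)] = (self._clock() + self._ttl, answer)


cache = QueryCache(ttl_seconds=60)
cache.set("What is the PTO policy?", "15 days per year [handbook.pdf]")
hit = cache.get("  what is the pto policy? ")
```

If document-level ACLs are in use, the cache key should also include the caller's permission set, so that one user's cached answer is never served to someone who cannot see the underlying documents.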

RAG Quality Evaluation

Always measure these metrics:

| Metric | What it measures |
|---|---|
| Faithfulness | Is the answer grounded in retrieved docs? |
| Answer Relevance | Does it actually answer the question? |
| Context Precision | Are retrieved chunks relevant? |
| Context Recall | Did retrieval find all needed info? |

Tools: RAGAS framework, Vertex AI Evaluation Service, custom BigQuery dashboards.
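The two retrieval metrics can be computed by hand on a small labeled set before adopting a framework. The sketch below uses the plain set-based definitions and assumes you have, for each test question, the IDs of the chunks a human marked as relevant. Frameworks such as RAGAS define their versions differently (typically with an LLM judge), so these numbers are not directly comparable to theirs.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved chunks that are actually relevant."""
    if not retrieved:
        return 0.0
    return sum(1 for chunk_id in retrieved if chunk_id in relevant) / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of relevant chunks that retrieval managed to find."""
    if not relevant:
        return 1.0  # nothing to find, so nothing was missed
    return len(set(retrieved) & relevant) / len(relevant)


retrieved_ids = ["c1", "c2", "c3", "c4"]
relevant_ids = {"c1", "c3", "c9"}
precision = context_precision(retrieved_ids, relevant_ids)
recall = context_recall(retrieved_ids, relevant_ids)
```

In this example two of the four retrieved chunks are relevant (precision 0.5) and two of the three relevant chunks were found (recall about 0.67). Low precision points toward re-ranking or a smaller `top_k`; low recall points toward chunking or hybrid search.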

Timeline for Enterprise RAG on GCP

| Phase | Timeline | Deliverable |
|---|---|---|
| POC | 1–2 weeks | Agent Builder + sample docs |
| MVP | 4–6 weeks | Cloud Run RAG API + basic UI |
| Production | 8–12 weeks | Full pipeline, auth, monitoring |
| Optimization | Ongoing | Eval loop, fine-tuning, cost control |

This is a battle-tested architecture used by enterprises running internal knowledge assistants, HR bots, IT support agents, and compliance Q&A systems on GCP.